
As of early 2026, the global cloud landscape has moved beyond simple elastic resource optimization. The defining architecture is no longer just ‘cloud-native’; it is ‘AI-native.’ This shift marks an inflection point: infrastructure must not only support massive computation but also actively participate in managing its own lifecycle. The primary catalyst is the widespread adoption of Agentic AI, meaning autonomous systems that can perceive, reason, act, and, crucially, self-correct. These agents require infrastructure that is not just scalable but also context-aware and resilient down to the silicon level. Organizations that navigate this shift successfully treat AI not as one more workload but as the fabric that underpins the cloud utility itself.


1. Redefining the Hardware Stack: AI Factories and Specialized Silicon

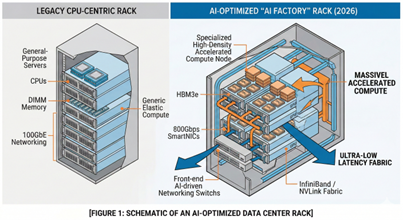

Traditional general-purpose virtual machines are rapidly losing their status as the default atomic unit of cloud compute. Modern foundation models, particularly those serving real-time inference at scale, have pushed legacy x86 architectures beyond sustainable cost and power envelopes. By 2026, major cloud providers had largely shifted to purpose-built hardware clusters, commonly referred to as AI Factories.

These specialized clusters prioritize massively parallel processing. They are built from arrays of GPUs (Graphics Processing Units) and TPUs (Tensor Processing Units). The definition of ‘ready’ at the hardware layer now covers not only having these accelerators but also optimizing the high-speed, low-latency fabrics that link them, such as NVIDIA’s Spectrum-4 Ethernet platform or other advanced Ethernet fabrics, so that thousands of separate chips behave as a single, unified supercomputer.

Furthermore, custom silicon is now mainstream. Cloud giants like Google (TPU v6), AWS (Trainium 2, Inferentia 3), and Azure (Cobalt and Maia) have deployed their third and fourth generations of custom processors optimized specifically for the transformer architecture and matrix multiplication.

Fig 1: Evolution from CPU‑centric servers to AI‑optimized data center factory architecture.

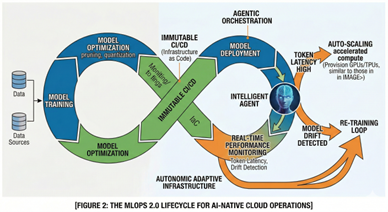

2. Operationalizing Intelligence: The Rise of MLOps 2.0

DevOps, the operational philosophy that enabled cloud-native scale, is proving insufficient for the unique challenges of AI. While DevOps manages immutable code and binaries, AI requires managing mutable data, model decay, hyperparameter configurations, and statistical drift. MLOps (Machine Learning Operations) bridges this gap. By 2026, MLOps has evolved into ‘MLOps 2.0,’ shifting from experiment tracking to automated, production-grade workflows integrated directly into continuous integration (CI) and continuous delivery (CD) pipelines.
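To make ‘statistical drift’ concrete, here is a minimal sketch of one way a pipeline might flag it. It assumes the Population Stability Index (PSI) as the drift metric and a 0.2 alert threshold, which is a common rule of thumb; neither is prescribed by this article, and the function names `population_stability_index` and `needs_retraining` are hypothetical.

```python
import math
from typing import Sequence


def population_stability_index(
    baseline: Sequence[float],
    live: Sequence[float],
    bins: int = 10,
    eps: float = 1e-6,
) -> float:
    """PSI between a training-time sample and a live sample of one feature."""
    lo, hi = min(baseline), max(baseline)
    # Equal-width buckets over the baseline range; fall back to 1.0 if constant.
    width = (hi - lo) / bins or 1.0

    def bucket_shares(values: Sequence[float]) -> list[float]:
        counts = [0] * bins
        for v in values:
            # Values outside the baseline range are clamped into the edge buckets.
            idx = int((v - lo) / width)
            counts[max(0, min(bins - 1, idx))] += 1
        total = len(values)
        # eps keeps empty buckets from producing log(0).
        return [max(c / total, eps) for c in counts]

    expected = bucket_shares(baseline)
    actual = bucket_shares(live)
    return sum((a - e) * math.log(a / e) for a, e in zip(actual, expected))


def needs_retraining(psi: float, threshold: float = 0.2) -> bool:
    """Assumed policy: a PSI above the threshold triggers the retraining pipeline."""
    return psi > threshold


if __name__ == "__main__":
    training_sample = [i / 100 for i in range(100)]
    production_sample = [0.5 + i / 200 for i in range(100)]  # shifted upward
    psi = population_stability_index(training_sample, production_sample)
    print(f"PSI={psi:.2f}, retrain={needs_retraining(psi)}")
```

In a CI/CD-integrated setup, a check like this could run on a schedule against recent inference inputs and gate an automated retraining job, rather than relying on someone noticing degraded accuracy.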

Infrastructure readiness in an MLOps 2.0 paradigm means establishing an ‘immutable but adaptive’ infrastructure. Using Infrastructure as Code (IaC) is critical, but these configurations must now be dynamic. An AI orchestrator, upon detecting increased model usage or token latency, must be empowered to provision its own underlying accelerated compute, deploy the optimized model weights, and configure the vector networking route, all without human intervention.
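The scaling decision described above can be sketched as a small pure function. This is an illustration under stated assumptions, not a reference implementation: `ScalingPolicy` and `desired_replicas` are hypothetical names, the proportional rule resembles the one used by the Kubernetes Horizontal Pod Autoscaler, and actually provisioning accelerators or deploying weights would be delegated to whatever IaC tooling is in place.

```python
import math
from dataclasses import dataclass


@dataclass(frozen=True)
class ScalingPolicy:
    """Hypothetical guardrails an orchestrator is allowed to act within."""
    target_p95_latency_ms: float  # latency objective per generated token
    min_replicas: int = 1
    max_replicas: int = 16        # cost ceiling on accelerator-backed replicas
    tolerance: float = 0.10       # ignore deviations within 10% of the target


def desired_replicas(current: int, observed_p95_ms: float, policy: ScalingPolicy) -> int:
    """Return the replica count to request for the observed token latency."""
    ratio = observed_p95_ms / policy.target_p95_latency_ms
    # Inside the tolerance band, do nothing; this avoids constant small changes.
    if abs(ratio - 1.0) <= policy.tolerance:
        return current
    # Scale proportionally to how far latency is from the target, rounding up.
    proposed = math.ceil(current * ratio)
    # Clamp to the policy limits so automation cannot scale without bound.
    return max(policy.min_replicas, min(policy.max_replicas, proposed))


if __name__ == "__main__":
    policy = ScalingPolicy(target_p95_latency_ms=50.0)
    print(desired_replicas(current=4, observed_p95_ms=100.0, policy=policy))
```

Keeping the decision separate from the provisioning step means the declarative configuration stays the source of truth: the orchestrator only changes a replica count within declared limits, which is one reading of ‘immutable but adaptive.’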

Fig 2: MLOps 2.0 lifecycle enabling adaptive, production‑grade AI operations at scale.

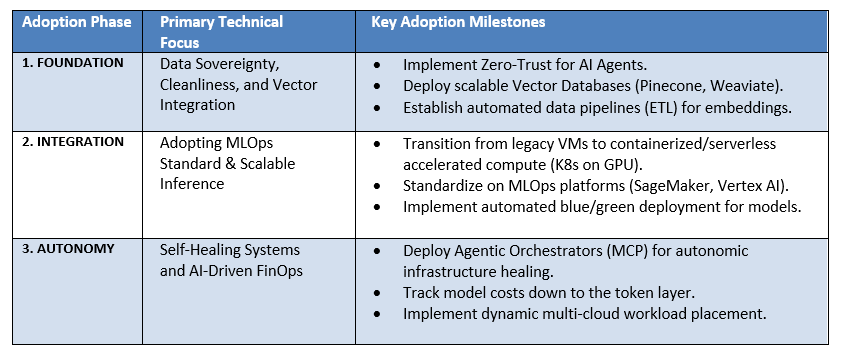

4. How to Adopt AI in Cloud Infrastructure: The Readiness Roadmap

Transforming a legacy cloud environment into an AI-native intelligence ecosystem is a multi-phased technical journey. It requires commitment across organizational boundaries, particularly between IT infrastructure, data engineering, and data science.

Orchestrating Intelligence Takeaways

In 2026, cloud infrastructure is no longer a static utility; it is a living, breathing ‘Thinking Cloud.’ For organizations, treating AI as a mere application layer will inevitably lead to unacceptable latency and uncontrolled costs. Enterprises must adopt an architecture where intelligence is integrated into every layer, from the specialized silicon of the AI factory to the autonomic monitoring systems driven by MLOps 2.0. By prioritizing advanced FinOps, data modernization through RAG and vector databases, and the operational rigor of automated deployment, organizations can transform their cloud environment from a passive cost center into their primary competitive engine.

The bottom line for your business is this: adopting AI in the cloud isn’t just a software update; it’s a foundational shift. Success in this new era depends on three simple pillars:

- The Muscles: Upgrading to specialized GPU/TPU power.

- The Nerves: Using MLOps to make your deployments fast and automatic.

- The Brain: Implementing FinOps and Vector Data to keep the system smart and affordable.

The future of the cloud isn’t just about “storage” anymore; it’s about autonomous intelligence. Those who build on this “living” infrastructure today will be the ones leading the market tomorrow.


WRITTEN BY Pankaj P Waghralkar

Pankaj Waghralkar is a Subject Matter Expert and Microsoft Certified Trainer at CloudThat. He has a total of 15+ years of professional experience in fields such as Cloud Computing, Web Development, and Digital Marketing, along with training experience in IT and Computer Engineering streams. He has trained more than 2,500 students and working and corporate professionals. He has published two national patents on biometric technologies and more than 10 international research articles on technology trends. His expertise includes designing secure hybrid cloud infrastructures and enhancing online visibility through strategic web development and marketing initiatives. Proficient in applying advanced cloud technologies, effective networking solutions, and comprehensive software engineering practices to drive business growth, Pankaj has trained professionals across industries, helping them master Azure services such as Virtual Networks, Azure Active Directory, Security, and Networking. Known for his clear teaching style and deep technical knowledge, Pankaj is dedicated to shaping the next generation of cloud experts.


March 24, 2026
