

Introduction

AI agents have come a long way from simple request-response chatbots. Today, developers expect agents that can listen, think, and respond in real time. That’s exactly what the Strands Agents SDK delivers through its Bidirectional Streaming (BidiAgent) feature.

Strands is an open-source SDK from AWS that simplifies the development of AI agents. Its bidirectional streaming capability takes things further by enabling persistent, two-way audio and text conversations between users and agents, much like talking to a real person. No more waiting for a full response before you can speak again.

Whether you’re building a voice assistant, a real-time customer support bot, or a multimodal AI app, Strands BidiAgent gives you the foundation to do it cleanly and efficiently.


Bidirectional Streaming

Traditional AI interactions follow a simple pattern: you send a message, the model processes it, and you get a response. Bidirectional streaming breaks this pattern by keeping a persistent connection open between the user and the agent.

This means:

- The agent can listen and respond simultaneously

- Users can interrupt the agent mid-response

- Audio, text, and tool execution happen concurrently

- Conversations feel natural and fluid

Strands implements this through the BidiAgent class, which wraps supported models and exposes send() and receive() methods for full control over the conversation flow.
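The send()/receive() split can be made concrete with a minimal asyncio sketch of a persistent two-way session. This is not the Strands API. ToyBidiSession, its start()/stop() methods, and the echoing fake model are hypothetical stand-ins. The sketch shows one idea: send() returns immediately, while a separate reader keeps consuming output from receive() over the same long-lived session.

```python
import asyncio
from typing import AsyncIterator, Optional


class ToyBidiSession:
    """Hypothetical stand-in for a bidirectional session (not the Strands API).

    send() pushes input onto an inbound queue without waiting for a reply;
    receive() yields output events as the background "model" produces them.
    """

    def __init__(self) -> None:
        self._inbound: asyncio.Queue = asyncio.Queue()
        self._outbound: asyncio.Queue = asyncio.Queue()
        self._worker: Optional[asyncio.Task] = None

    async def start(self) -> None:
        # Open the persistent "connection": one long-lived worker task.
        self._worker = asyncio.create_task(self._model_loop())

    async def send(self, text: str) -> None:
        # Returns immediately; the caller never blocks on a full response.
        await self._inbound.put(text)

    async def stop(self) -> None:
        # A None sentinel closes the session; wait for the worker to finish.
        await self._inbound.put(None)
        if self._worker is not None:
            await self._worker

    async def receive(self) -> AsyncIterator[str]:
        # Yield output events until the worker signals end of stream.
        while (event := await self._outbound.get()) is not None:
            yield event

    async def _model_loop(self) -> None:
        # Fake model: answer each input, in order, as soon as it arrives.
        while (msg := await self._inbound.get()) is not None:
            await self._outbound.put(f"reply:{msg.upper()}")
        await self._outbound.put(None)


async def demo() -> list:
    session = ToyBidiSession()
    await session.start()
    received = []

    async def reader() -> None:
        async for event in session.receive():
            received.append(event)

    reader_task = asyncio.create_task(reader())
    for msg in ["hello", "what time is it"]:
        await session.send(msg)  # sending while the reader is still listening
    await session.stop()
    await reader_task
    return received


if __name__ == "__main__":
    print(asyncio.run(demo()))
```

Input and output travel through independent queues. Because of that, the caller can keep sending while replies are still arriving, which is the property a real bidirectional model connection provides.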

Key Features

- Multi-Provider Support – One of the key strengths of Strands is its flexibility. Instead of locking developers into a single ecosystem, it supports multiple bidirectional streaming providers out of the box:

  - Amazon Nova Sonic – An AWS-native solution designed for low-latency, real-time voice interactions, making it ideal for applications already running within Amazon Web Services environments.

  - OpenAI Realtime API – Backed by models such as GPT-4o, it enables high-quality, real-time conversational experiences with strong reasoning and language capabilities.

  - Google Gemini Live – Provides multimodal streaming support, enabling agents to process and respond to a combination of text, audio, and potentially visual inputs.

- Voice Activity Detection – The agent automatically detects when a user begins speaking, enabling smooth and natural interruptions. As soon as new input is detected, it stops the current response, clears the audio buffer, and immediately shifts focus to the new input.

- Concurrent Tool Execution – One of the most powerful capabilities of Strands is its ability to run tools in the background without interrupting the conversation. The agent can answer a question, call an API, and continue streaming audio simultaneously, all within the same interaction.

- Flexible I/O – Strands provides flexible input and output options, making it easy to adapt the agent for different environments and use cases:

  - BidiAudioIO – Enables real-time interaction using your local microphone and speakers, ideal for voice-based applications.

  - BidiTextIO – Allows text-based interaction, making it useful for testing, debugging, and development without audio dependencies.

  - Custom I/O Channels – Developers can build their own input/output integrations for web applications, backend services, or embedded systems.

- Rich Event System – The receive() method emits typed events covering connection state, audio output, transcription, tool use, token usage, and errors, giving you full observability into the conversation.

- Lifecycle Control – Manual start() and stop() methods let you control exactly when conversations begin and end, with support for graceful shutdown via a built-in stop_conversation tool.
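The interruption behaviour described under Voice Activity Detection can be sketched in plain asyncio. This is an illustration under stated assumptions, not how Strands or the model providers implement it. The crude RMS-energy detector in is_speech(), its 500.0 threshold, and the ToyPlayback class are all hypothetical, and production systems use far more robust speech detection. When speech is detected mid-response, the sketch cancels the playback task and clears the queued audio. This mirrors the stop, clear, and refocus sequence described above.

```python
import asyncio
import math


def is_speech(frame: list, threshold: float = 500.0) -> bool:
    """Crude, hypothetical VAD: compare the RMS of 16-bit PCM samples to a threshold."""
    rms = math.sqrt(sum(s * s for s in frame) / len(frame))
    return rms > threshold


class ToyPlayback:
    """Hypothetical speaker output holding queued response audio."""

    def __init__(self) -> None:
        self.buffer: asyncio.Queue = asyncio.Queue()
        self.played: list = []

    async def play_forever(self) -> None:
        # Play queued chunks one by one; each chunk takes ~10 ms to "play".
        while True:
            chunk = await self.buffer.get()
            await asyncio.sleep(0.01)
            self.played.append(chunk)
            self.buffer.task_done()

    def clear(self) -> int:
        # Drop every chunk that has not started playing; return how many.
        dropped = 0
        while not self.buffer.empty():
            self.buffer.get_nowait()
            self.buffer.task_done()
            dropped += 1
        return dropped


async def respond_until_barge_in(mic_frame: list) -> tuple:
    """Play a 10-chunk response; interrupt it if mic_frame contains speech."""
    playback = ToyPlayback()
    player = asyncio.create_task(playback.play_forever())
    for i in range(10):
        playback.buffer.put_nowait(f"chunk-{i}".encode())

    await asyncio.sleep(0.035)  # a few chunks play before the mic frame arrives
    dropped = 0
    if is_speech(mic_frame):
        player.cancel()             # stop the current response immediately
        dropped = playback.clear()  # discard audio that has not played yet
    else:
        await playback.buffer.join()  # no barge-in: let the response finish
        player.cancel()               # then shut down the idle player
    try:
        await player
    except asyncio.CancelledError:
        pass
    return len(playback.played), dropped
```

A silent frame such as [3, -2, 4, -1] lets all ten chunks play. A loud frame such as [0, 1200, -1500, 900] cuts playback short and drops the chunks still waiting in the buffer.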
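At its core, concurrent tool execution means running the tool call as a background task while the response stream keeps flowing. The sketch below assumes a hypothetical lookup_order_status tool that takes about 50 ms. stream_audio is a stand-in for the agent's audio output. Neither is part of Strands, and the sketch does not claim to reflect how Strands schedules tools internally. The resulting log shows audio chunks being emitted while the tool is still running, followed by the tool result.

```python
import asyncio


async def lookup_order_status(order_id: str) -> str:
    """Hypothetical tool that simulates a ~50 ms call to a backend API."""
    await asyncio.sleep(0.05)
    return f"Order {order_id} has shipped"


async def stream_audio(log: list, tool_done: asyncio.Event) -> None:
    """Stand-in for audio output: emit a chunk every 10 ms until the tool finishes."""
    i = 0
    while not tool_done.is_set():
        log.append(f"audio-{i}")
        i += 1
        await asyncio.sleep(0.01)


async def turn_with_background_tool() -> list:
    log = []
    tool_done = asyncio.Event()
    # Start the tool call and the audio stream as independent tasks.
    tool_task = asyncio.create_task(lookup_order_status("1234"))
    speaker = asyncio.create_task(stream_audio(log, tool_done))
    result = await tool_task  # audio keeps flowing while we wait here
    tool_done.set()
    await speaker
    log.append(f"tool-result: {result}")
    return log


if __name__ == "__main__":
    print(asyncio.run(turn_with_background_tool()))
```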
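Consuming a typed event stream usually comes down to dispatching on the event type. The event classes below (ConnectionStart, Transcript, ToolUse, Usage) are hypothetical placeholders, not Strands' actual event types, whose names and fields may differ. fake_receive() stands in for receive(). The loop also illustrates graceful shutdown: when a tool call named stop_conversation arrives, the consumer stops reading events.

```python
import asyncio
from dataclasses import dataclass
from typing import AsyncIterator, Union


# Hypothetical event types; Strands' real event classes may be named and
# structured differently.
@dataclass
class ConnectionStart:
    session_id: str


@dataclass
class Transcript:
    role: str
    text: str


@dataclass
class ToolUse:
    name: str


@dataclass
class Usage:
    total_tokens: int


Event = Union[ConnectionStart, Transcript, ToolUse, Usage]


async def fake_receive() -> AsyncIterator[Event]:
    """Stand-in for receive(): yields a scripted sequence of events."""
    script = [
        ConnectionStart("abc123"),
        Transcript("user", "that's all, thanks"),
        Usage(42),
        ToolUse("stop_conversation"),
        Transcript("assistant", "never handled"),
    ]
    for event in script:
        await asyncio.sleep(0)  # yield control, as a real network stream would
        yield event


async def run_until_stopped() -> list:
    handled = []
    async for event in fake_receive():
        if isinstance(event, ConnectionStart):
            handled.append(f"connected {event.session_id}")
        elif isinstance(event, Transcript):
            handled.append(f"{event.role}: {event.text}")
        elif isinstance(event, Usage):
            handled.append(f"tokens={event.total_tokens}")
        elif isinstance(event, ToolUse) and event.name == "stop_conversation":
            handled.append("graceful shutdown")
            break  # stop reading; a real app would also call stop() here
    return handled
```

The final scripted Transcript is never handled, because the loop exits as soon as the stop_conversation tool use arrives.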

Use Cases

- Voice Assistants – Strands enables real-time voice assistants that support natural, free-flowing conversations. Users can ask questions, give commands, or interrupt mid-response. These assistants can integrate with APIs and business logic to perform tasks such as scheduling, answering queries, and automating routine workflows, creating a more human-like and efficient interaction experience.

- Customer Support Bots – Voice-based support bots allow users to speak naturally instead of navigating menus. The agent can fetch data, call APIs, and respond instantly while continuing the conversation. This reduces wait times, improves resolution speed, and enhances customer experience through smooth, real-time interactions.

- Healthcare Intake Systems – Voice-driven intake systems conversationally collect patient information. The agent asks questions, validates responses in real time, and allows corrections naturally. This reduces manual effort, improves accuracy, and creates a more comfortable experience compared to traditional forms.

- Voice-Enabled RAG – Users can query documents or knowledge bases via voice. The agent retrieves relevant information and delivers spoken responses instantly. This makes accessing enterprise or research data faster, more intuitive, and hands-free.

- Live Tutoring and Education – Strands enables interactive tutoring, allowing students to ask questions verbally and receive immediate answers. The agent adapts to the learner’s pace, making education more engaging and personalized compared to static learning methods.

- Accessibility Tools – Voice-first interfaces allow users to interact without typing. The agent supports hands-free control and spoken feedback, improving accessibility for users with disabilities and making applications more inclusive.

Code Examples

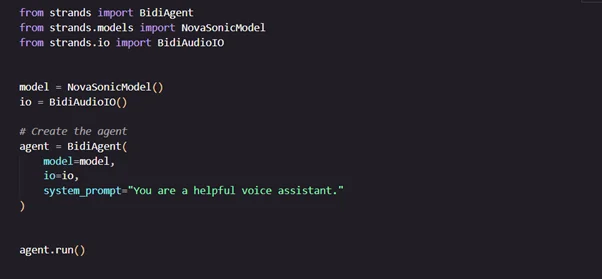

Basic Voice Agent

This example shows how to create a simple real-time voice assistant:

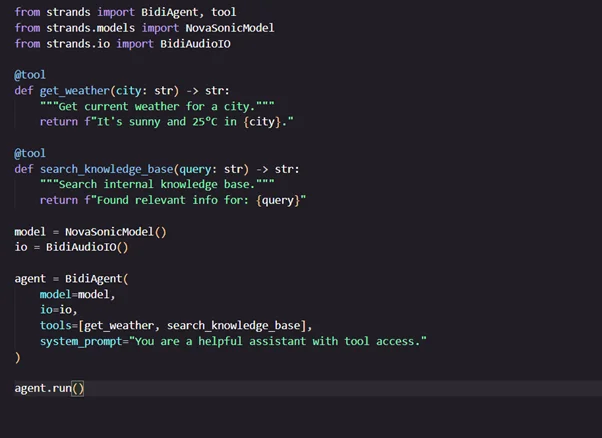

Agent with Tools

Add custom tools to extend your agent’s capabilities:

Conclusion

Strands BidiAgent provides a powerful abstraction for building real-time voice and multimodal AI agents. It handles complex challenges such as persistent connections, interruption handling, concurrent tool execution, and multi-provider integration, allowing developers to focus on creating meaningful user experiences.

Although still experimental, Strands BidiAgent offers a strong foundation and is well worth exploring for real-time AI applications.

Drop a query if you have any questions regarding Strands BidiAgent and we will get back to you quickly.



FAQs

1. Is bidirectional streaming production-ready?

ANS: – Bidirectional streaming in Strands is currently marked as experimental. It works well for prototypes and internal tools, but you should review the latest documentation and test thoroughly before using it in production.

2. Do I need a headset?

ANS: – Yes, if you’re using BidiAudioIO locally. Since audio libraries like PyAudio don’t support echo cancellation, using speakers can create feedback loops. A headset helps ensure clean input and output.

3. Can I use this with my own knowledge base?

ANS: – Absolutely. You can define a custom @tool that queries your knowledge base, such as a vector database or an Amazon Bedrock Knowledge Base, and pass it to the agent. It will be invoked automatically during conversations.
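Here is a minimal, SDK-free sketch of such a tool. The in-memory KNOWLEDGE_BASE, its sample passages, and the keyword-overlap scoring are hypothetical stand-ins for a real vector search or Amazon Bedrock Knowledge Base query. With Strands installed, you would decorate the function with @tool (assuming the usual `from strands import tool` import) and pass it to the agent. The decorator is left out here so the example runs on its own.

```python
import re

# Hypothetical sample passages standing in for a vector database or an
# Amazon Bedrock Knowledge Base.
KNOWLEDGE_BASE = {
    "returns": "Items can be returned within 30 days with a receipt.",
    "shipping": "Standard shipping takes 3 to 5 business days.",
    "warranty": "Electronics carry a one-year limited warranty.",
}


def _tokens(text: str) -> set:
    # Lowercase word tokens, ignoring punctuation.
    return set(re.findall(r"[a-z0-9]+", text.lower()))


# With the SDK installed, @tool would go on the line above this function.
def search_knowledge_base(query: str, top_k: int = 1) -> list:
    """Return up to top_k passages whose words overlap most with the query."""
    query_words = _tokens(query)
    scored = []
    for topic, passage in KNOWLEDGE_BASE.items():
        score = len(query_words & _tokens(topic + " " + passage))
        if score > 0:
            scored.append((score, passage))
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return [passage for _, passage in scored[:top_k]]
```

For example, search_knowledge_base("How long does shipping take?") returns the shipping passage, and a query with no overlapping words returns an empty list. The docstring and type hints matter in practice, because a tool framework typically uses them to describe the tool to the model.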

WRITTEN BY Livi Johari

Livi Johari is a Research Associate at CloudThat with a keen interest in Data Science, Artificial Intelligence (AI), and the Internet of Things (IoT). She is passionate about building intelligent, data-driven solutions that integrate AI with connected devices to enable smarter automation and real-time decision-making. In her free time, she enjoys learning new programming languages and exploring emerging technologies to stay current with the latest innovations in AI, data analytics, and AIoT ecosystems.


May 8, 2026
