
Introduction

Kubernetes is an open-source container orchestration platform that automates operational tasks such as deploying, scaling, and managing containerized applications. It continuously reconciles cluster resources toward the desired state defined in the manifests.

A pod is the smallest deployable unit in Kubernetes, and each pod can run one or more containers. Each pod gets an IP address assigned by the installed CNI plugin. Pods are not permanent: they are replaced by new pods after configuration changes, dynamic scaling, node replacement, and similar events. Hence, we use a Service to expose a set of pods as a stable network service.


Kubernetes Service

A Service selects pods by their labels and gets a stable DNS name that is unique within the cluster. How a Service is exposed is controlled by its type field, which can be set to:

- ClusterIP: The default setting. It provides a private virtual IP inside the cluster.

- NodePort: It builds on ClusterIP and exposes the Service on a specific port on all worker nodes.

- LoadBalancer: Behind the scenes, it uses ClusterIP and NodePort and relies on a service controller to create a load balancer from the cloud provider. Depending on the type of load balancer, it can add extra features; on AWS, for example, these include DDoS protection, SSL termination, and WAF integration.
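For comparison with the LoadBalancer type, a NodePort Service might look like the following minimal sketch. The Service name and the nodePort value are assumptions chosen for illustration; the selector and ports mirror the other manifests in this post.

```yaml
# Sketch only: name and nodePort are hypothetical values.
apiVersion: v1
kind: Service
metadata:
  name: app-nodeport        # hypothetical name
  namespace: dev
spec:
  type: NodePort
  selector:
    app.kubernetes.io/name: app
  ports:
    - port: 80              # port on the Service's cluster IP
      targetPort: 8080      # container port on the selected pods
      nodePort: 30080       # must lie in the node port range (30000-32767 by default)
      protocol: TCP
```

Omitting the type field altogether would give a ClusterIP Service, since that is the default.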

The following is a Service manifest of type LoadBalancer:

```yaml
apiVersion: v1
kind: Service
metadata:
  name: app
  namespace: dev
spec:
  type: LoadBalancer
  selector:
    app.kubernetes.io/name: app
  ports:
    - port: 80
      targetPort: 8080
      protocol: TCP
```

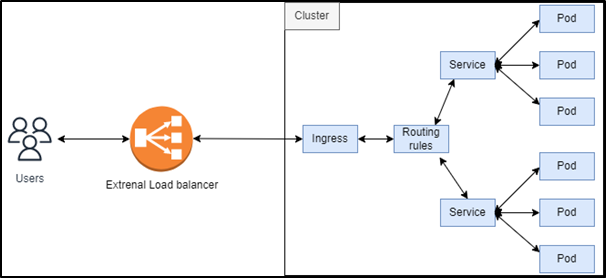

If we have multiple endpoints to expose externally, we could use a separate load balancer for each Service, but that increases the complexity and cost of the infrastructure. Ingress solves this: together with Services, it provides access to the pods.

Ingress

Ingress is a built-in Kubernetes API object that exposes Services externally. It contains a set of rules for routing traffic; each rule can include a host, a path, and the backend to which matching traffic is routed. It can also be configured for SSL termination and load balancing. You can define a default backend for requests that match none of the rules.

A basic Ingress definition looks like this:

```yaml
apiVersion: networking.k8s.io/v1
kind: Ingress
metadata:
  name: app-ingress
  annotations:
    nginx.ingress.kubernetes.io/rewrite-target: /
spec:
  ingressClassName: nginx
  rules:
    - http:
        paths:
          - path: /foo
            pathType: Prefix
            backend:
              service:
                name: app
                port:
                  number: 80   # required field; assumed to match the Service port shown earlier
```
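Rules can also match on a host, and the Ingress spec accepts a defaultBackend for requests that match no rule. The sketch below illustrates both; the host name, the Ingress name, and the fallback Service are hypothetical and not part of the original setup.

```yaml
# Sketch only: host, Ingress name, and fallback Service are hypothetical.
apiVersion: networking.k8s.io/v1
kind: Ingress
metadata:
  name: app-ingress-host
spec:
  ingressClassName: nginx
  defaultBackend:              # used when no rule matches the request
    service:
      name: fallback           # hypothetical Service
      port:
        number: 80
  rules:
    - host: app.example.com    # rule applies only to this host
      http:
        paths:
          - path: /foo
            pathType: Prefix
            backend:
              service:
                name: app
                port:
                  number: 80
```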

No ingress controller is installed by default; it must be installed separately. Cloud providers offer their own ingress controllers, and there are popular open-source ones such as the nginx ingress controller. AWS provides the AWS Load Balancer Controller.

AWS Load Balancer Controller

This approach uses an external load balancer that routes traffic to the pods behind a Service. The controller configures target groups, whose targets can be either pod IPs or instances.

```yaml
apiVersion: networking.k8s.io/v1
kind: Ingress
metadata:
  name: ingress
  namespace: dev
  annotations:
    alb.ingress.kubernetes.io/load-balancer-name: ingress
    alb.ingress.kubernetes.io/target-type: ip
    alb.ingress.kubernetes.io/scheme: internet-facing
    alb.ingress.kubernetes.io/healthcheck-path: /health
spec:
  ingressClassName: alb
  rules:
    - http:
        paths:
          - path: /app1
            pathType: Prefix
            backend:
              service:
                name: app1
                port:
                  number: 8000   # numeric ports use "number"; "name" is for named ports
          - path: /app2
            pathType: Prefix
            backend:
              service:
                name: app2
                port:
                  number: 8080
```

Annotations in the Ingress manifest pass information to the ingress controller, such as the load balancer name, the target type (ip, where pods are registered directly, or instance), and whether the load balancer is internal or internet-facing.

For each Ingress object, the controller configures target groups and listener rules on the ALB. The default ingressClassName for the AWS Load Balancer Controller is alb. A default listener rule that returns a fixed 404 response is also created. Multiple Ingress resources can be handled by a single load balancer by placing them in the same group with the annotation alb.ingress.kubernetes.io/group.name, and their order can be set with the annotation alb.ingress.kubernetes.io/group.order.
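As a rough illustration of grouping, the sketch below places one Ingress into a shared group. The group name shared-alb, the Ingress name, and the order value are assumptions; other Ingress resources carrying the same group.name would be served by the same ALB.

```yaml
# Sketch only: group name, Ingress name, and order value are hypothetical.
apiVersion: networking.k8s.io/v1
kind: Ingress
metadata:
  name: app1-ingress
  namespace: dev
  annotations:
    alb.ingress.kubernetes.io/group.name: shared-alb   # Ingresses sharing this name share one ALB
    alb.ingress.kubernetes.io/group.order: "10"        # annotation values are strings, so quote the number
    alb.ingress.kubernetes.io/target-type: ip
    alb.ingress.kubernetes.io/scheme: internet-facing
spec:
  ingressClassName: alb
  rules:
    - http:
        paths:
          - path: /app1
            pathType: Prefix
            backend:
              service:
                name: app1
                port:
                  number: 8000
```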

The controller can be configured to watch a single namespace or all namespaces; currently, it does not support watching a selected set of multiple namespaces. It natively supports HTTP to HTTPS redirection, WAF integration, and authentication.
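The redirection and WAF features are driven by annotations on the Ingress. The fragment below is a sketch of the metadata section only; the Ingress name is hypothetical and the two ARNs are placeholders that would need to be replaced with real resources.

```yaml
# Sketch only: metadata fragment of an Ingress; ARNs are placeholders.
metadata:
  name: secure-ingress         # hypothetical name
  namespace: dev
  annotations:
    # Listen on both ports so that plain HTTP requests can be redirected
    alb.ingress.kubernetes.io/listen-ports: '[{"HTTP": 80}, {"HTTPS": 443}]'
    # Redirect HTTP traffic to the HTTPS listener on port 443
    alb.ingress.kubernetes.io/ssl-redirect: "443"
    # Certificate for the HTTPS listener (placeholder ARN)
    alb.ingress.kubernetes.io/certificate-arn: arn:aws:acm:REGION:ACCOUNT_ID:certificate/CERTIFICATE_ID
    # Associate a WAFv2 web ACL with the ALB (placeholder ARN)
    alb.ingress.kubernetes.io/wafv2-acl-arn: arn:aws:wafv2:REGION:ACCOUNT_ID:regional/webacl/NAME/ID
```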

Nginx Ingress Controller

In this approach, we use the nginx ingress controller, an in-cluster layer 7 reverse proxy. It routes traffic from outside the cluster to internal resources according to the Ingress configuration. This adds an extra layer inside the cluster: the nginx controller pods, exposed by a Service, take responsibility for routing traffic. Cluster operators are responsible for installing and configuring the controller.

The default nginx backend returns a 404 error. You can change this by configuring controller.defaultBackend or by using the annotation nginx.ingress.kubernetes.io/default-backend.

```yaml
apiVersion: networking.k8s.io/v1
kind: Ingress
metadata:
  name: ingress
  namespace: dev
spec:
  ingressClassName: nginx
  rules:
    - http:
        paths:
          - path: /app1
            pathType: Prefix
            backend:
              service:
                name: app1
                port:
                  number: 8000   # numeric ports use "number"; "name" is for named ports
          - path: /app2
            pathType: Prefix
            backend:
              service:
                name: app2
                port:
                  number: 8080
```
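The default-backend annotation mentioned above could be applied per Ingress roughly as follows. This is a sketch: custom-404 is a hypothetical Service assumed to exist in the same namespace as the Ingress, and the Ingress name is also made up.

```yaml
# Sketch only: "custom-404" is a hypothetical Service in the dev namespace.
apiVersion: networking.k8s.io/v1
kind: Ingress
metadata:
  name: ingress-custom-default
  namespace: dev
  annotations:
    # Name of the Service to use as the default backend for this Ingress
    nginx.ingress.kubernetes.io/default-backend: custom-404
spec:
  ingressClassName: nginx
  rules:
    - http:
        paths:
          - path: /app1
            pathType: Prefix
            backend:
              service:
                name: app1
                port:
                  number: 8000
```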

Multiple Ingress resources can be merged, but without the group or order annotations used by the AWS Load Balancer Controller. The default ingress class name is nginx.

Conclusion

As we have seen, the AWS Load Balancer Controller provides a highly available and elastic entry point and offloads the load-balancing infrastructure from the cluster itself. The nginx ingress controller gives more flexibility, but it must be run and maintained inside the cluster, which adds operational work. Depending on whether we value less operational work or more flexibility, we can choose the right controller, or even use multiple controllers for different use cases.


FAQs

1. Which is the default load balancer type created for service type LoadBalancer in EKS?

ANS: – By default, it provisions a Classic Load Balancer, which is now considered a legacy ELB type. If you need a specific type of ELB, you can request it with the annotation service.beta.kubernetes.io/aws-load-balancer-type: nlb
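As a sketch, the annotation goes under the Service metadata; the rest of the manifest below reuses the LoadBalancer Service from earlier in this post.

```yaml
apiVersion: v1
kind: Service
metadata:
  name: app
  namespace: dev
  annotations:
    # Request a Network Load Balancer instead of the default type
    service.beta.kubernetes.io/aws-load-balancer-type: nlb
spec:
  type: LoadBalancer
  selector:
    app.kubernetes.io/name: app
  ports:
    - port: 80
      targetPort: 8080
      protocol: TCP
```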

2. How do you configure the AWS Load Balancer Controller to watch only a particular namespace?

ANS: – Set the value watchNamespace to watch only one namespace, or leave it unset to watch all namespaces.
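Assuming the controller is installed with its Helm chart, the setting would typically go into the values file. The fragment below is a sketch under that assumption; the cluster name is a placeholder, and the exact keys should be checked against the chart version in use.

```yaml
# Sketch only: Helm values fragment, assuming a Helm-based install.
clusterName: my-cluster   # placeholder for the EKS cluster name
watchNamespace: dev       # watch only this namespace; omit to watch all namespaces
```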

WRITTEN BY Dharshan Kumar K S

Dharshan Kumar is a Research Associate at CloudThat. He has working knowledge of various cloud platforms such as AWS, Microsoft, and GCP. He is interested in learning more about the AWS Well-Architected Framework and writes about it.


January 3, 2023
