
“Try clearing your cache” is one of the most common fixes we hear about during a computer crisis. But what exactly is caching?

Table of Contents

1. Introduction to Caching
2. Cache Evictions
3. Use Cases of Caching
4. AWS ElastiCache
5. Use Cases of AWS ElastiCache
6. Conclusion
7. About CloudThat
8. FAQs

Caching means storing frequently requested things closer to those who ask for them. The book “Algorithms to Live By” explains caching with a library scenario. Suppose we need to read a book that will take a week to finish. We cannot travel to the library every day to read it, so we borrow it for the week (or buy it outright) and keep it on our desk for daily access. Similarly, keeping frequently accessed data in memory, which is much faster to read than our database, is known as caching. Caching helps us day to day by increasing performance and providing a better user experience across applications.

Browser caching, for example, stores components of a website to reduce its load time. To shorten page load time, the browser saves a copy of most of a web page's content to the device's hard drive and keeps these files until their time-to-live (TTL) expires or until the on-disk cache is full. Users can also clear the cache themselves.
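The two conditions that end a cached file's life (its TTL elapsing, or the cache filling up) can be sketched as a tiny in-memory cache in Python. This is an illustration under stated assumptions, not how any particular browser implements its cache: the class name `TTLCache`, the `max_entries` limit, and the choice to drop the oldest entry when full are all hypothetical.

```python
import time
from collections import OrderedDict


class TTLCache:
    """Hypothetical in-memory cache whose entries expire after a fixed time-to-live."""

    def __init__(self, ttl_seconds, max_entries, clock=time.monotonic):
        self.ttl = ttl_seconds
        self.max_entries = max_entries
        self.clock = clock  # injectable so expiry can be demonstrated without sleeping
        self._data = OrderedDict()  # key -> (value, expires_at), oldest insertion first

    def set(self, key, value):
        if key in self._data:
            del self._data[key]  # re-inserting moves the key to the newest position
        elif len(self._data) >= self.max_entries:
            self._data.popitem(last=False)  # cache full: drop the oldest entry (assumed policy)
        self._data[key] = (value, self.clock() + self.ttl)

    def get(self, key):
        entry = self._data.get(key)
        if entry is None:
            return None
        value, expires_at = entry
        if self.clock() >= expires_at:
            del self._data[key]  # TTL elapsed: discard and treat as a miss
            return None
        return value


# Usage with a fake clock so the TTL can be stepped over instantly.
now = [0.0]
cache = TTLCache(ttl_seconds=60, max_entries=2, clock=lambda: now[0])
cache.set("logo.png", "<image bytes>")
now[0] = 30
print(cache.get("logo.png"))  # <image bytes>
now[0] = 61
print(cache.get("logo.png"))  # None (expired)
```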

Eviction refers to how old, rarely used, or excessively large data is dropped from the cache so that the cache stays within its memory budget. Evictions are also needed whenever the database receives significant updates or new entries, so that the cache does not keep serving outdated data. Eviction can be carried out under several policies; well-known examples include least recently used (LRU), least frequently used (LFU), and first in, first out (FIFO).

There are many more cache eviction policies, and the right one depends on the use case. Choosing a suitable policy matters and can improve performance considerably. Now that we know what caching is and how a cache evicts data, let us see how caching can be done in AWS and what benefits it brings to an application.
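As an illustration of one common policy, least recently used (LRU) eviction drops the entry that has gone longest without being read or written once the cache is over capacity. The sketch below builds an exact LRU on `collections.OrderedDict`; the class name `LRUCache` and the capacity are illustrative assumptions. Production caches may differ: Redis, for example, approximates LRU by sampling keys rather than tracking exact order.

```python
from collections import OrderedDict


class LRUCache:
    """Fixed-capacity cache that evicts the least recently used entry."""

    def __init__(self, capacity):
        if capacity <= 0:
            raise ValueError("capacity must be positive")
        self.capacity = capacity
        self._data = OrderedDict()  # least recently used first, most recently used last

    def get(self, key, default=None):
        if key not in self._data:
            return default
        self._data.move_to_end(key)  # a read marks the key as most recently used
        return self._data[key]

    def put(self, key, value):
        """Store a value; return the evicted key, or None if nothing was evicted."""
        if key in self._data:
            self._data.move_to_end(key)
        self._data[key] = value
        if len(self._data) > self.capacity:
            evicted_key, _ = self._data.popitem(last=False)  # drop the LRU entry
            return evicted_key
        return None


cache = LRUCache(capacity=2)
cache.put("a", 1)
cache.put("b", 2)
cache.get("a")            # "a" becomes the most recently used key
print(cache.put("c", 3))  # prints: b
```

Because every read moves a key to the end, a frequently requested item (such as a popular profile) stays cached while items nobody asks for drift to the front and are evicted first.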

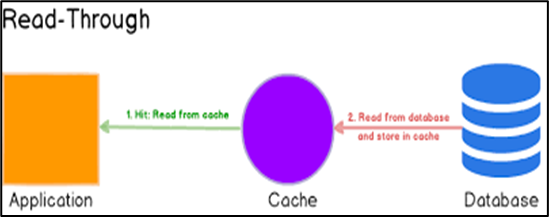

Suppose we build an application whose backend code calls our database to retrieve the profiles of actors and actresses worldwide. Some actors have a massive fan following, so the number of requests for their profiles will be huge. Every request that reaches the database forces it to fetch the results again, which strains the database. What if we store such a profile in temporary memory and serve it from there on every request? That would improve application performance considerably and reduce the load on, and cost of, the database. This is exactly the kind of use case caching is for. There are many types of caching.

Image Source: https://images.app.goo.gl/q3fRvUm4MCRiQ3YbA

Let us now learn about Amazon’s cloud service for caching: ElastiCache.


Amazon Web Services provides a managed caching technology for our applications. ElastiCache is a fully managed, in-memory caching service that can accelerate application and database performance, or serve as a primary data store for use cases that don’t require durability, such as session stores, gaming leaderboards, streaming, and analytics. ElastiCache is compatible with both Redis and Memcached.

In the following caching diagram, our Node application on EC2 uses ElastiCache to cache frequently requested data so that it does not have to call the database for every request.

Image Source: https://images.app.goo.gl/Lf7dgKVehvdP1TFY9

Now let us discuss the lazy-loading strategy in AWS ElastiCache. ElastiCache acts as an in-memory key-value store that sits between our application and its databases. Consider the architecture above, where a Node app updates and retrieves data from DocumentDB, with ElastiCache sitting in between. Every time the Node app needs data, it first checks the cache; if the data exists there, it is read from the cache, which is called a cache hit. If the data is not in the cache, which is called a cache miss, the app retrieves it from the database and then stores it in the cache so that the next request for it is a hit.
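The lazy-loading (cache-aside) read path described above can be sketched in Python. To keep the example self-contained, plain dictionaries stand in for ElastiCache and DocumentDB; the class and method names are hypothetical, and a real application would use a Redis or Memcached client and a database driver instead.

```python
class LazyLoadingRepository:
    """Cache-aside reads: check the cache first, fall back to the database on a miss."""

    def __init__(self, cache, database):
        self.cache = cache        # stand-in for ElastiCache (key -> value)
        self.database = database  # stand-in for DocumentDB (key -> value)
        self.hits = 0
        self.misses = 0

    def get(self, key):
        if key in self.cache:
            self.hits += 1  # cache hit: served without touching the database
            return self.cache[key]
        self.misses += 1  # cache miss: read from the database...
        value = self.database.get(key)
        if value is not None:
            self.cache[key] = value  # ...and populate the cache for the next request
        return value


db = {"actor:42": {"name": "Popular Actor"}}
repo = LazyLoadingRepository(cache={}, database=db)
repo.get("actor:42")  # miss: loaded from the database and cached
repo.get("actor:42")  # hit: served from the cache
print(repo.hits, repo.misses)  # 1 1
```

A consequence of this design is that only data that is actually requested ends up in the cache, but the first request for each key always pays the database round trip.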

Another strategy is write-through, in which data is added to or updated in the cache whenever it is written to the database. Because write-through can fill the cache with data that is never read, we can add a TTL to each entry so that stale data does not linger and wasted space is minimized. The bottom line is that caching can make an application considerably faster.
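Write-through combined with a TTL can be sketched as follows. Dictionaries again stand in for the database and for ElastiCache, and the class name `WriteThroughStore` is hypothetical. As a design choice of this sketch, a read that finds an expired or missing entry falls back to the database and refills the cache, so the read path behaves like lazy loading.

```python
import time


class WriteThroughStore:
    """Writes update the database and the cache together; cached entries expire after a TTL."""

    def __init__(self, database, ttl_seconds, clock=time.monotonic):
        self.database = database  # stand-in for the primary database (key -> value)
        self.cache = {}           # stand-in for ElastiCache: key -> (value, expires_at)
        self.ttl = ttl_seconds
        self.clock = clock        # injectable clock so expiry is easy to demonstrate

    def write(self, key, value):
        self.database[key] = value                          # 1. persist to the database
        self.cache[key] = (value, self.clock() + self.ttl)  # 2. update the cache in the same step

    def read(self, key):
        entry = self.cache.get(key)
        if entry is not None and self.clock() < entry[1]:
            return entry[0]  # fresh cache entry
        self.cache.pop(key, None)  # expired or absent: discard any stale entry
        value = self.database.get(key)
        if value is not None:
            self.cache[key] = (value, self.clock() + self.ttl)  # refill on read
        return value


now = [0.0]
db = {}
store = WriteThroughStore(db, ttl_seconds=300, clock=lambda: now[0])
store.write("actor:42", {"name": "Popular Actor"})
print(db["actor:42"] == store.cache["actor:42"][0])  # True: both updated together
now[0] = 301  # TTL elapsed
print(store.read("actor:42"))  # {'name': 'Popular Actor'} (reloaded from the database)
```

The TTL bounds how long an entry can go unread before it is discarded, and it also limits how long the cache can disagree with the database if data is changed outside the write path.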

CloudThat is an official AWS Advanced Consulting Partner, Microsoft Gold Partner, and Training Partner, helping people develop knowledge of the cloud and helping their businesses aim for higher goals using best-in-industry cloud computing practices and expertise. We are on a mission to build a robust cloud computing ecosystem by disseminating knowledge on technological intricacies within the cloud space. Our blogs, webinars, case studies, and white papers enable all stakeholders in the cloud computing sphere.

Feel free to drop a comment with any queries you have regarding caching, AWS ElastiCache, or cloud services, and we will get back to you quickly. To get started, go through CloudThat's offerings: our Expert Advisory page and Managed Services Package.

