
Overview

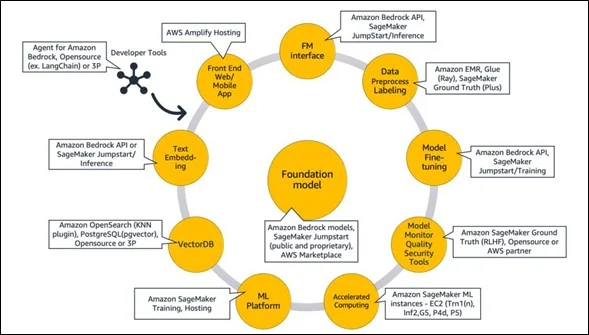

Generative AI models are widely used across industries, but most of them are designed to be general-purpose. While this makes them versatile, it also limits their ability to provide domain-specific, accurate, and consistent responses. To address this limitation, AWS provides fine-tuning capabilities through Amazon Bedrock, enabling customization of foundation models such as Amazon Nova.


Introduction

Foundation models are trained on large-scale datasets and can perform a wide range of tasks such as text generation, summarization, and question answering. However, out of the box these models have no knowledge of a particular organization's business context, internal processes, or domain-specific terminology.

For example, a generic AI model may provide broad responses to customer queries, but it may not understand product-specific details or pricing logic relevant to a particular business. This is where fine-tuning becomes useful.

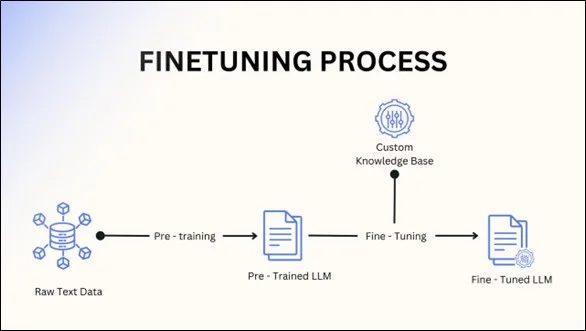

Fine-tuning is the process of training a pre-existing model using a curated dataset that reflects specific requirements. With Amazon Nova models available in Amazon Bedrock, AWS offers a managed approach to fine-tuning, eliminating the need for infrastructure management and reducing implementation complexity.

In simple terms, fine-tuning transforms a general-purpose model into a specialized system that produces more relevant and accurate outputs.

Understanding Amazon Nova and Fine-Tuning

Amazon Nova is a family of foundation models available within Amazon Bedrock. These models support various use cases, including conversational AI, text generation, and other generative tasks. They are designed to be scalable and suitable for enterprise-level applications.

Fine-Tuning

Fine-tuning involves training a base model using labeled input-output pairs. The objective is to teach the model how to respond in specific scenarios based on provided examples.

Example:

Input: Customer wants to sell a used iPhone 13

Output: Suggested price range ₹30,000–₹35,000

By training on multiple such examples, the model learns patterns, response styles, and business-specific logic.
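To make the example concrete, the sketch below turns such input-output pairs into JSON Lines records, one conversation per line. It assumes the `bedrock-conversation-2024` schema (a `schemaVersion` field, an optional `system` prompt, and alternating `user`/`assistant` messages) that the Amazon Nova fine-tuning documentation describes; confirm the exact layout against the current documentation before uploading. The function names and the system prompt are hypothetical.

```python
import json
from typing import Any, Dict, Optional

# Assumed layout: the "bedrock-conversation-2024" schema described in the
# Amazon Nova fine-tuning documentation. Verify it before uploading data.
SCHEMA_VERSION = "bedrock-conversation-2024"


def to_training_record(prompt: str, completion: str,
                       system: Optional[str] = None) -> Dict[str, Any]:
    """Wrap one labeled input-output pair as a single-turn conversation."""
    record: Dict[str, Any] = {"schemaVersion": SCHEMA_VERSION}
    if system:
        record["system"] = [{"text": system}]
    record["messages"] = [
        {"role": "user", "content": [{"text": prompt}]},
        {"role": "assistant", "content": [{"text": completion}]},
    ]
    return record


def to_jsonl(pairs, system: Optional[str] = None) -> str:
    """Serialize (input, output) pairs as JSON Lines: one record per line."""
    return "".join(
        json.dumps(to_training_record(p, c, system), ensure_ascii=False) + "\n"
        for p, c in pairs
    )


if __name__ == "__main__":
    pairs = [
        ("Customer wants to sell a used iPhone 13",
         "Suggested price range ₹30,000–₹35,000"),
    ]
    # Hypothetical system prompt; the output would be saved as a .jsonl file.
    print(to_jsonl(pairs, system="You are a pricing assistant for used phones."), end="")
```

Each line of the resulting file is one training example, so adding more pairs simply adds more lines.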

How Fine-Tuning Works in Amazon Bedrock

The fine-tuning process in Amazon Bedrock follows a structured workflow:

- Select a Base Model

Choose an appropriate Amazon Nova base model based on your requirements, including performance and cost considerations.

- Prepare the Dataset

Create a dataset consisting of input-output pairs. This step is critical, as the quality of the dataset directly impacts the performance of the model.

- Configure and Start the Training Job

Upload the dataset to Amazon S3 and configure hyperparameters such as the number of epochs and the batch size. AWS manages the underlying training infrastructure.

- Generate a Custom Model

After training is completed, a customized version of the model is created.

- Deploy and Use the Model

Once the custom model has been deployed (for example, with Provisioned Throughput), it can be invoked through the Amazon Bedrock APIs and integrated into applications.
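A minimal sketch of the training and invocation steps using the AWS SDK for Python (boto3) follows. `build_customization_job` only assembles the arguments for the Amazon Bedrock `create_model_customization_job` call, so it can be checked without AWS access; `submit_job` and `ask_custom_model` need credentials, an IAM role that Amazon Bedrock can assume, and a deployed custom model. Every ARN, bucket, model identifier, and the hyperparameter key names (`epochCount`, `learningRate`) are assumptions to replace with the values and keys your chosen base model actually accepts.

```python
from typing import Any, Dict, Optional


def build_customization_job(
    job_name: str,
    custom_model_name: str,
    role_arn: str,
    base_model_id: str,
    train_s3_uri: str,
    output_s3_uri: str,
    epochs: int = 2,
    learning_rate: float = 1e-5,
    validation_s3_uri: Optional[str] = None,
) -> Dict[str, Any]:
    """Assemble keyword arguments for bedrock.create_model_customization_job."""
    if epochs < 1:
        raise ValueError("epochs must be at least 1")
    request: Dict[str, Any] = {
        "jobName": job_name,
        "customModelName": custom_model_name,
        "roleArn": role_arn,                   # role Bedrock assumes to access S3
        "baseModelIdentifier": base_model_id,  # placeholder Nova base model ID/ARN
        "customizationType": "FINE_TUNING",    # supervised fine-tuning
        "trainingDataConfig": {"s3Uri": train_s3_uri},
        "outputDataConfig": {"s3Uri": output_s3_uri},
        # Hyperparameter values are passed as strings; these key names are
        # assumptions, so check which ones your base model supports.
        "hyperParameters": {
            "epochCount": str(epochs),
            "learningRate": str(learning_rate),
        },
    }
    if validation_s3_uri:
        request["validationDataConfig"] = {"validators": [{"s3Uri": validation_s3_uri}]}
    return request


def submit_job(request: Dict[str, Any], region: str = "us-east-1") -> str:
    """Start the training job and return its ARN (requires AWS credentials)."""
    import boto3  # imported here so the builder above works without boto3

    bedrock = boto3.client("bedrock", region_name=region)
    return bedrock.create_model_customization_job(**request)["jobArn"]


def ask_custom_model(model_arn: str, question: str, region: str = "us-east-1") -> str:
    """Send one user turn to a deployed custom model via the Converse API."""
    import boto3

    runtime = boto3.client("bedrock-runtime", region_name=region)
    response = runtime.converse(
        modelId=model_arn,  # e.g. the ARN of the model's Provisioned Throughput
        messages=[{"role": "user", "content": [{"text": question}]}],
    )
    return response["output"]["message"]["content"][0]["text"]
```

A typical flow would be to build the request, pass it to `submit_job`, poll the job (for example with `get_model_customization_job`) until it completes, deploy the resulting custom model, and then call `ask_custom_model` with the deployed model's ARN. Keeping the request builder free of AWS calls makes the configuration easy to review and test.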

Types of Fine-Tuning

Supervised Fine-Tuning (SFT)

This approach uses labeled datasets in which both the input and the expected output are provided. It is the most commonly used method and provides a high level of control over model behavior.

Reinforcement Fine-Tuning (RFT)

In this approach, the model learns from feedback, typically a reward signal that scores its responses, rather than from explicit, labeled outputs. It is useful in scenarios where collecting labeled data is difficult and iterative improvements are required.
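In reinforcement fine-tuning, the feedback usually comes from a grader that scores each candidate response. The hypothetical grader below illustrates the idea for the pricing example: rather than requiring one exact answer, it gives full reward to any suggested range that contains a reference price and partial reward for near misses. It is a plain Python illustration of a reward function, not the interface Amazon Bedrock uses for reinforcement fine-tuning; the function names, the rupee-range format, and the linear decay are all assumptions.

```python
import re
from typing import Optional, Tuple

# Matches ranges such as "₹30,000–₹35,000" or "₹30,000-35,000".
_RANGE = re.compile(r"₹\s*([\d,]+)\s*[–-]\s*₹?\s*([\d,]+)")


def parse_price_range(text: str) -> Optional[Tuple[int, int]]:
    """Extract the first rupee price range from a model response."""
    match = _RANGE.search(text)
    if not match:
        return None
    low, high = (int(g.replace(",", "")) for g in match.groups())
    return (low, high) if low <= high else (high, low)


def pricing_reward(response: str, reference_price: int) -> float:
    """Score a response in [0, 1] against a known fair price.

    1.0 if the suggested range contains the reference price, decaying
    linearly with the distance to the nearest bound, and 0.0 if no range
    can be parsed at all.
    """
    if reference_price <= 0:
        raise ValueError("reference_price must be positive")
    parsed = parse_price_range(response)
    if parsed is None:
        return 0.0
    low, high = parsed
    if low <= reference_price <= high:
        return 1.0
    gap = min(abs(reference_price - low), abs(reference_price - high))
    return max(0.0, 1.0 - gap / reference_price)
```

Graded rewards like this let several acceptable answers earn credit, which is the kind of signal that is hard to express as a single labeled output.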

When to Use Fine-Tuning?

Fine-tuning is worth applying only when a specific requirement justifies the additional effort and cost.

Suitable Scenarios

- When responses must follow domain-specific knowledge

- When consistency in tone and output format is required

- When business logic needs to be embedded into the model

Unsuitable Scenarios

- When prompt engineering can achieve acceptable results

- When there is insufficient training data

- When cost constraints are significant
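Before committing to fine-tuning, it is often worth checking whether a few in-context examples already produce acceptable answers from the base model. The sketch below builds a few-shot conversation in the message format used by the Amazon Bedrock Converse API (alternating `user` and `assistant` turns, each holding a list of `text` content blocks); the helper name and the example pairs are hypothetical.

```python
from typing import Dict, List, Sequence, Tuple

Message = Dict[str, object]


def few_shot_messages(examples: Sequence[Tuple[str, str]], question: str) -> List[Message]:
    """Turn labeled examples into prior turns, then append the real question."""
    messages: List[Message] = []
    for user_text, assistant_text in examples:
        messages.append({"role": "user", "content": [{"text": user_text}]})
        messages.append({"role": "assistant", "content": [{"text": assistant_text}]})
    # The conversation must end with the user turn the model should answer.
    messages.append({"role": "user", "content": [{"text": question}]})
    return messages


if __name__ == "__main__":
    examples = [
        ("Customer wants to sell a used iPhone 13",
         "Suggested price range ₹30,000–₹35,000"),
    ]
    for message in few_shot_messages(examples, "Customer wants to sell a used iPhone 12"):
        print(message["role"], message["content"])
```

The resulting list could be passed as the `messages` argument of a `converse` call against a base Nova model. If the answers are consistently good enough, prompt engineering is likely the cheaper option.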

Cost Considerations

Fine-tuning introduces additional costs that must be considered before implementation.

Training Cost

Charges are based on the number of tokens processed during training, which is the number of tokens in the training dataset multiplied by the number of epochs.

Storage Cost

Storing the customized model incurs a recurring monthly charge.

Inference Cost

Custom models often require provisioned throughput, which may result in charges even when the model is not actively used.

Careful evaluation of cost versus benefit is necessary before proceeding with fine-tuning.
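The three cost components can be combined into a rough back-of-the-envelope estimate. The sketch below assumes training is billed per 1,000 tokens processed (dataset tokens multiplied by epochs), storage as a flat monthly fee, and Provisioned Throughput per model unit per hour for every hour it is provisioned, whether or not requests arrive. All rates and the class and function names are placeholders; take real prices from the Amazon Bedrock pricing page for your Region and model.

```python
from dataclasses import dataclass
from typing import Dict


@dataclass
class FineTuningCostInputs:
    dataset_tokens: int        # tokens in the training dataset
    epochs: int                # passes over the dataset during training
    train_price_per_1k: float  # placeholder: price per 1,000 training tokens
    storage_per_month: float   # placeholder: monthly custom-model storage fee
    months_stored: int
    pt_price_per_hour: float   # placeholder: price per model unit per hour
    model_units: int
    hours_provisioned: float   # billed whether or not the model serves traffic


def estimate_costs(c: FineTuningCostInputs) -> Dict[str, float]:
    """Return per-component and total cost estimates in the pricing currency."""
    training = c.dataset_tokens * c.epochs / 1000 * c.train_price_per_1k
    storage = c.storage_per_month * c.months_stored
    inference = c.pt_price_per_hour * c.model_units * c.hours_provisioned
    return {
        "training": training,
        "storage": storage,
        "inference": inference,
        "total": training + storage + inference,
    }


if __name__ == "__main__":
    # Illustrative numbers only, not real AWS prices.
    inputs = FineTuningCostInputs(
        dataset_tokens=1_000_000, epochs=2, train_price_per_1k=0.01,
        storage_per_month=2.0, months_stored=3,
        pt_price_per_hour=10.0, model_units=1, hours_provisioned=720,
    )
    for component, amount in estimate_costs(inputs).items():
        print(f"{component:>9}: {amount:,.2f}")
```

Training is a one-off charge per job, while the inference term keeps growing with every provisioned hour, so it is worth modeling the two separately when comparing fine-tuning against prompt engineering.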

Use Cases

Fine-tuning can be applied across multiple domains:

- Chatbots: Improving response accuracy using domain-specific datasets such as FAQs and product information.

- E-commerce: Enabling personalized recommendations and pricing strategies.

- Customer Support: Training models on company policies and historical support data to improve resolution quality.

- Document Processing: Extracting structured insights from domain-specific documents.

Conclusion

Fine-tuning Amazon Nova models using Amazon Bedrock provides a structured way to customize AI systems for specific business needs. It enables organizations to move beyond generic outputs and build applications that are more accurate, consistent, and context-aware.

However, fine-tuning requires careful planning, particularly in terms of dataset preparation and cost management. It is not always necessary and should be used when there is a clear requirement for customization.

As organizations continue to adopt generative AI, the ability to effectively customize models will be a significant factor in building reliable, scalable solutions.

Drop a query if you have any questions regarding Amazon Nova and we will get back to you quickly.


FAQs

1. What is Amazon Nova in Amazon Bedrock?

ANS: – Amazon Nova is a family of foundation models provided by AWS within Amazon Bedrock, designed for generative AI tasks such as text generation and conversational applications.

2. Do I need machine learning expertise to perform fine-tuning?

ANS: – No, Amazon Bedrock provides a managed environment that simplifies the fine-tuning process. However, understanding data preparation is important.

3. Is fine-tuning always required for AI applications?

ANS: – No, many use cases can be handled using prompt engineering. Fine-tuning is only required when higher accuracy and domain-specific responses are needed.

WRITTEN BY Akanksha Choudhary

Akanksha works as a Research Associate at CloudThat, specializing in data analysis and cloud-native solutions. She designs scalable data pipelines leveraging AWS services such as AWS Lambda, Amazon API Gateway, Amazon DynamoDB, and Amazon S3. She is skilled in Python and frontend technologies including React, HTML, CSS, and Tailwind CSS.


April 30, 2026
