|

Voiced by Amazon Polly |

Overview

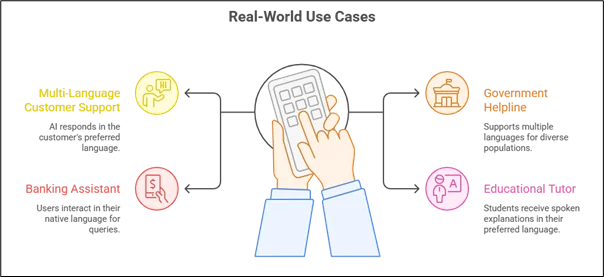

Voice is quickly becoming the most natural way we interact with technology. From calling customer support to speaking with virtual assistants, people prefer talking over typing, especially in regions where multiple languages are spoken daily. But building a system that understands and responds instantly in different languages is not simple.

In this blog, we’ll walk you through how to design a real-time multi-language voice-to-voice AI system on AWS using the Sonic model. And just to clarify, by multi-language, we mean the system supports multiple languages (like English, Hindi, Gujarati, Tamil, etc.) independently. It is not translating from one language to another. If the user speaks Hindi, the system responds in Hindi. If they speak English, it responds in English.

Let’s break it down step by step.

Pioneers in Cloud Consulting & Migration Services

- Reduced infrastructural costs

- Accelerated application deployment

Why Voice-to-Voice Instead of Traditional Pipelines?

Most traditional voice systems follow this flow:

- Speech → Convert to Text (ASR)

- Text → Process with LLM

- Text → Convert back to Speech (TTS)

While this works, it has some drawbacks:

- Higher latency

- Loss of tone and emotion

- Complex architecture (multiple services stitched together)

- Language-specific tuning at each stage

This is where the Sonic model changes the game.

Sonic works directly from voice input to voice output. It understands spoken audio and generates speech without requiring separate speech-to-text and text-to-speech systems.

The result?

- Faster responses

- More natural conversations

- Cleaner architecture

- Better real-time performance

What Makes Sonic Ideal for Multi-Language Systems?

Sonic supports multiple languages natively. That means:

- It can detect the language from speech.

- It can continue the conversation in that same language.

- It maintains conversational context across turns.

This makes it perfect for countries like India, where users may speak different regional languages depending on preference.

Instead of building separate ASR and TTS pipelines per language, you rely on a unified voice intelligence layer.

High-Level Architecture on AWS

Now, let’s design this system on AWS in a practical way.

- Client Layer

This is where the user interacts with the system. It could be:

- A web application

- A mobile app

- A call center integration (via SIP, Twilio, or Amazon Connect)

The client captures microphone input and streams audio in small chunks.

- Real-Time Streaming Layer

For real-time conversation, we need continuous communication between the client and server.

We use:

- Amazon API Gateway (WebSocket API) for bi-directional streaming

- Or a container-based streaming service running on Amazon ECS or Amazon EKS

The client sends small audio chunks (e.g., every 20–50 ms). This reduces latency and allows incremental processing.

- AI Processing Layer (Sonic Model)

This is the core of the system.

You can host Sonic in two ways:

- Amazon Bedrock (if available as a managed model)

- Custom deployment on Amazon EKS/Amazon ECS with GPU instances

When audio arrives:

- Sonic processes the stream in real time.

- It understands context.

- It generates voice output.

- The response is streamed back immediately.

No separate ASR. No separate TTS. Just voice-to-voice.

- Session and Context Management

Conversations are rarely one question long. Users ask follow-ups.

So we need memory.

We can use:

- Amazon DynamoDB for storing session state

- Amazon ElastiCache (Redis) for low-latency active session storage

Each session can store:

- Session ID

- Detected language

- Conversation context

- Timestamps

For example:

Session ID: abc123

Language: Hindi

Context: User asked about loan eligibility

This ensures smooth multi-turn conversations.

How Multi-Language Support Works?

There are two main design approaches.

Approach 1: Single Multi-Language Sonic Model

If Sonic supports multiple languages in one instance:

- Automatically detect language from the first few seconds.

- Lock that language for the session.

- Continue the conversation in that language.

This is the simplest and most scalable approach.

Approach 2: Language-Specific Model Routing

If performance optimization is required:

- Use a lightweight language detector.

- Route audio to language-specific Sonic instances.

- Maintain session consistency.

This approach can reduce model load per instance.

Keeping It Real-Time

Latency is everything in voice systems.

If the user speaks and waits 2 seconds for a reply, it feels unnatural.

Target latency:

- Response should begin within 300–500 ms.

To achieve this:

- Use GPU-backed instances.

- Enable streaming inference.

- Avoid cold starts (keep containers warm).

- Deploy infrastructure in the same AWS region as users.

- Use efficient audio codecs like Opus.

Real-time streaming makes the system feel human.

Scaling the System

If you expect hundreds or thousands of concurrent calls, you need scalability.

Horizontal Scaling

- Auto-scale Sonic containers based on GPU usage.

- Use Elastic Load Balancer.

- Deploy across multiple Availability Zones.

Session Handling

Each active voice session consumes compute. So:

- Track active sessions.

- Implement timeouts.

- Clean up inactive connections.

Security Considerations

Voice conversations often contain sensitive information.

To secure the system:

- Use TLS encryption for all streaming.

- Deploy models inside private VPC subnets.

- Encrypt stored data using AWS KMS.

- Apply IAM-based access control.

- Define retention policies for audio logs.

If building for enterprise or banking, audit logging is essential.

Monitoring and Observability

A production system must be monitored.

Track:

- Average response latency

- Active sessions

- Model processing time

- GPU utilization

- WebSocket connection health

Use:

- Amazon CloudWatch for metrics

- AWS X-Ray for tracing

- Alerts for latency spikes

This helps maintain reliability.

Cost Considerations

Main cost drivers:

- GPU compute (Amazon EKS/Amazon ECS)

- Amazon API Gateway WebSocket usage

- Data transfer

- Amazon DynamoDB operations

- Amazon Bedrock model invocations (if managed)

Cost optimization tips:

- Use auto-scaling

- Compress audio streams

- Monitor idle sessions

- Use right-sized GPU instances

Common Challenges

- Background Noise

Solution: Apply noise suppression before streaming.

- Language Switching Mid-Session

Solution: Detect language once and lock it per session.

- High GPU Costs

Solution: Smart auto-scaling and load balancing.

- Network Fluctuations

Solution: Implement reconnection logic with session IDs.

Conclusion

Building a real-time multi-language voice-to-voice AI system on AWS is no longer futuristic, it’s practical and achievable.

With the Sonic model handling direct voice interaction and AWS providing scalable infrastructure, you can create:

- Natural conversations

- Low-latency responses

- Support for multiple languages

- Enterprise-grade reliability

The key is designing for streaming, scalability, and session intelligence from day one.

Voice is becoming the primary interface for digital systems. And systems that can understand and respond naturally in multiple languages will define the next generation of AI-powered experiences.

Drop a query if you have any questions regarding Sonic model and we will get back to you quickly.

Empowering organizations to become ‘data driven’ enterprises with our Cloud experts.

- Reduced infrastructure costs

- Timely data-driven decisions

About CloudThat

FAQs

1. How is a voice-to-voice system different from a chatbot with speech support?

ANS: – A traditional chatbot with speech support converts speech to text, processes the text, and then converts the response back to speech. A voice-to-voice system like Sonic directly processes audio input and generates audio output. This reduces latency, preserves speech nuances, and simplifies the architecture.

2. Does multi-language mean translation between languages?

ANS: – No. In this system, multi-language means the AI supports multiple languages independently. If a user speaks Hindi, the system responds in Hindi. If the user speaks English, it responds in English. It does not translate from one language to another unless explicitly designed to do so.

3. How does the system know which language the user is speaking?

ANS: – The Sonic model can automatically detect the spoken language from the initial audio input. Alternatively, you can integrate a lightweight language detection layer before routing the audio to the appropriate model instance.

WRITTEN BY Modi Shubham Rajeshbhai

Shubham Modi is working as a Research Associate - Data and AI/ML in CloudThat. He is a focused and very enthusiastic person, keen to learn new things in Data Science on the Cloud. He has worked on AWS, Azure, Machine Learning, and many more technologies.

Login

Login

March 13, 2026

March 13, 2026 PREV

PREV

Comments