
In today’s digital-first world, customer interactions happen everywhere: chat windows, emails, social media posts, and, increasingly, voice calls. Businesses have long relied on sentiment analysis to understand how customers feel, but traditional approaches have focused almost entirely on text. While useful, this method is no longer sufficient. Human emotion is expressed not just through words but through tone, pitch, pace, and emphasis. A polite sentence delivered with frustration can carry a completely different meaning from the same sentence spoken with enthusiasm. This is why sentiment analysis must evolve beyond words. This article explains the challenges of relying on a single model or modality and how multimodal approaches built on AWS services are useful for sentiment analysis.


The Limits of Text-Only Sentiment Analysis

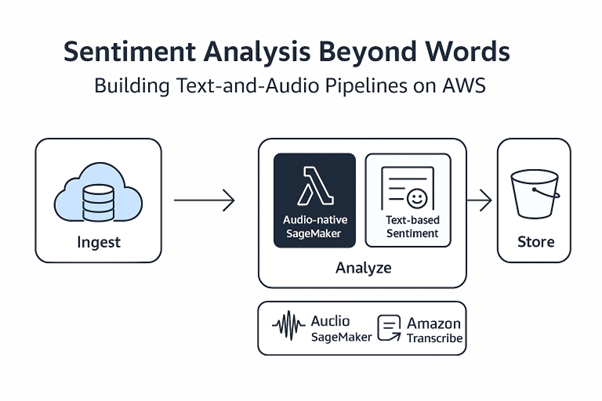

Text-based sentiment pipelines are well established and easy to deploy. On AWS, a typical setup involves converting speech to text using Amazon Transcribe and then applying sentiment classification with services like Amazon Bedrock. These systems are scalable, integrate seamlessly with analytics platforms, and work well for straightforward use cases.
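As a minimal sketch of this text-only path, the Python functions below take `boto3` clients as arguments (for example `boto3.client("transcribe")` and `boto3.client("bedrock-runtime")`), so the logic can be exercised with stand-in objects. They start and poll an Amazon Transcribe batch job, read the transcript out of Transcribe's output JSON, and ask a Bedrock model for a one-word label through the Converse API. The prompt wording, the label set, and whatever model ID you pass are illustrative assumptions, not a prescribed configuration.

```python
import json
import time

# Illustrative prompt and label set; both are assumptions, not an AWS-defined format.
SENTIMENT_LABELS = {"POSITIVE", "NEGATIVE", "NEUTRAL", "MIXED"}
PROMPT = (
    "Classify the sentiment of this customer utterance. Answer with exactly one word: "
    "POSITIVE, NEGATIVE, NEUTRAL, or MIXED.\n\nUtterance: {text}"
)


def transcribe_and_wait(transcribe, job_name, media_uri, language_code="en-US", poll_seconds=5.0):
    """Start an Amazon Transcribe batch job and poll until it finishes.

    Returns the URI of the transcript JSON file written by Transcribe.
    """
    transcribe.start_transcription_job(
        TranscriptionJobName=job_name,
        Media={"MediaFileUri": media_uri},
        LanguageCode=language_code,
    )
    while True:
        job = transcribe.get_transcription_job(TranscriptionJobName=job_name)["TranscriptionJob"]
        status = job["TranscriptionJobStatus"]
        if status == "COMPLETED":
            return job["Transcript"]["TranscriptFileUri"]
        if status == "FAILED":
            raise RuntimeError(job.get("FailureReason", "transcription job failed"))
        time.sleep(poll_seconds)  # job is still QUEUED or IN_PROGRESS


def transcript_text(transcript_json):
    """Pull the full transcript string out of Transcribe's output JSON document."""
    return json.loads(transcript_json)["results"]["transcripts"][0]["transcript"]


def classify_text_sentiment(bedrock_runtime, model_id, text):
    """Ask a Bedrock foundation model, via the Converse API, for a one-word sentiment label."""
    response = bedrock_runtime.converse(
        modelId=model_id,
        messages=[{"role": "user", "content": [{"text": PROMPT.format(text=text)}]}],
        inferenceConfig={"maxTokens": 5, "temperature": 0.0},
    )
    label = response["output"]["message"]["content"][0]["text"].strip().rstrip(".").upper()
    # Guard against free-form answers that fall outside the expected label set.
    return label if label in SENTIMENT_LABELS else "UNKNOWN"
```

Note that a transcript such as "Great, just great." gives the model only the words, which is exactly where the sarcasm problem described next comes from.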

However, text-only sentiment analysis struggles with ambiguity. Sarcasm, irony, and emotional undertones are often lost when speech is reduced to text. Even advanced large language models can misclassify sentiment when emotional cues are embedded in how something is said rather than what is said. As customer interactions become more complex and emotionally charged, these limitations become more apparent.

Listening to Emotion: Audio-Native Sentiment Analysis

Organizations are exploring audio-native sentiment analysis to overcome these limitations. Instead of relying on transcripts, audio-native models analyse raw speech signals directly. Models such as HuBERT and Wav2Vec extract embeddings that capture prosodic features such as intonation, rhythm, and stress, which are key indicators of emotional state.
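Models such as HuBERT and Wav2Vec 2.0 emit one embedding per short frame of audio (for example, the `last_hidden_state` output of Hugging Face Transformers' `HubertModel` or `Wav2Vec2Model`), so a downstream sentiment classifier usually needs those frames collapsed into one fixed-size vector. The sketch below uses statistics pooling (per-dimension mean plus standard deviation), one common choice that keeps a coarse record of how the signal varies across the utterance. It assumes the frame embeddings have already been extracted and works on plain Python lists; the pooling method is an illustrative assumption, not something the article prescribes.

```python
import math
from typing import List, Optional, Sequence


def stats_pool(
    frames: Sequence[Sequence[float]],
    mask: Optional[Sequence[int]] = None,
) -> List[float]:
    """Collapse frame-level embeddings (n_frames x dim) into one utterance vector.

    Returns the per-dimension mean followed by the per-dimension (population)
    standard deviation. Frames whose mask value is 0 (e.g. padding) are ignored.
    """
    if mask is None:
        mask = [1] * len(frames)
    if len(mask) != len(frames):
        raise ValueError("mask must have one entry per frame")
    kept = [f for f, m in zip(frames, mask) if m]
    if not kept:
        raise ValueError("at least one unmasked frame is required")
    dim = len(kept[0])
    if any(len(f) != dim for f in kept):
        raise ValueError("all frames must have the same embedding size")
    n = len(kept)
    means = [sum(f[i] for f in kept) / n for i in range(dim)]
    stds = [math.sqrt(sum((f[i] - means[i]) ** 2 for f in kept) / n) for i in range(dim)]
    return means + stds
```

The mask matters when utterances of different lengths are padded into one batch: without it, padding frames would distort both the mean and the spread.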

These models perform well when the same words are spoken with different emotions, such as a calm versus an angry delivery. However, when conversations become longer or more linguistically diverse, audio-only models can lose accuracy. The takeaway is that no single model or modality is sufficient on its own for nuanced sentiment analysis.

Fig 1: Hybrid AWS pipeline combining audio and text for advanced sentiment analysis.

The Power of Multimodal Sentiment Analysis

The most effective solution is not choosing between text and audio but combining both. Multimodal sentiment analysis combines lexical understanding of text with emotional signals from audio to create a more complete picture of customer sentiment.

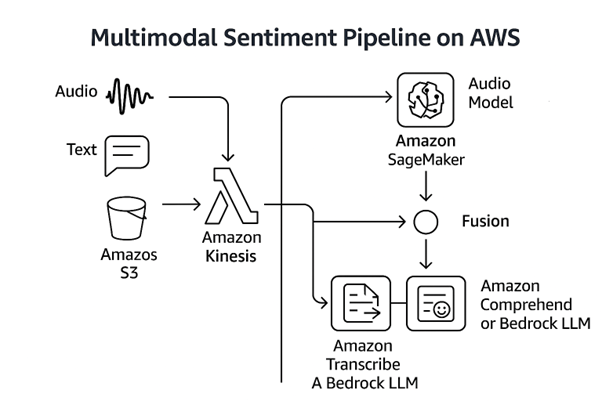

On AWS, such a pipeline can be built from streaming and serverless services. Amazon Kinesis ingests real-time audio and text data, and AWS Lambda routes the data through two parallel processing paths. One path performs audio-native sentiment inference using models hosted on Amazon SageMaker. The other transcribes speech with Amazon Transcribe and applies text-based sentiment analysis using Amazon Bedrock or Amazon Comprehend. The results are then stored in services such as Amazon S3 or Amazon Redshift for reporting, dashboards, and deeper analysis.

Fig 2: AWS multimodal pipeline combining audio and text for advanced sentiment analysis.
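The routing step in this pipeline can be sketched as a Python Lambda function triggered by a Kinesis data stream. Lambda delivers each Kinesis record's payload base64-encoded under `Records[i]["kinesis"]["data"]`; everything about the payload itself is a hypothetical schema: JSON with `call_id`, `modality` (`"audio"` or `"text"`), and either `audio_s3_uri` or an already-transcribed `text`. The endpoint name, bucket name, request body sent to the SageMaker model, and S3 key layout are placeholders as well. Audio records go to a SageMaker endpoint via `invoke_endpoint`, text records to Amazon Comprehend's `detect_sentiment`, and each result is written to S3 with `put_object`. Clients are passed into `route` so the logic can be tested without AWS access.

```python
import base64
import json

# Placeholder resource names; replace with your own.
AUDIO_ENDPOINT = "audio-sentiment-endpoint"  # hypothetical SageMaker endpoint
RESULTS_BUCKET = "sentiment-results-bucket"  # hypothetical S3 bucket


def decode_kinesis_records(event):
    """Yield (sequence_number, payload) for each Kinesis record in a Lambda event."""
    for record in event.get("Records", []):
        kinesis = record["kinesis"]
        payload = json.loads(base64.b64decode(kinesis["data"]))
        yield kinesis["sequenceNumber"], payload


def audio_path(sagemaker_runtime, item):
    """Audio path: call a SageMaker endpoint assumed to host an audio-native model."""
    response = sagemaker_runtime.invoke_endpoint(
        EndpointName=AUDIO_ENDPOINT,
        ContentType="application/json",
        Body=json.dumps({"audio_s3_uri": item["audio_s3_uri"]}),  # hypothetical request schema
    )
    return json.loads(response["Body"].read())


def text_path(comprehend, item):
    """Text path: score an already-transcribed segment with Amazon Comprehend."""
    response = comprehend.detect_sentiment(Text=item["text"], LanguageCode="en")
    return {"label": response["Sentiment"], "scores": response["SentimentScore"]}


def route(event, sagemaker_runtime, comprehend, s3):
    """Send each record down the matching path and store the result in S3."""
    results = []
    for seq, item in decode_kinesis_records(event):
        if item.get("modality") == "audio":
            result = audio_path(sagemaker_runtime, item)
        elif item.get("modality") == "text":
            result = text_path(comprehend, item)
        else:
            continue  # ignore record types this sketch does not handle
        record = {"call_id": item["call_id"], "modality": item["modality"], "result": result}
        s3.put_object(
            Bucket=RESULTS_BUCKET,
            Key=f"sentiment/{item['call_id']}/{item['modality']}-{seq}.json",
            Body=json.dumps(record).encode("utf-8"),
        )
        results.append(record)
    return results


_clients = None


def lambda_handler(event, context):
    """Lambda entry point; boto3 is imported lazily so `route` can be tested offline."""
    global _clients
    if _clients is None:  # reuse clients across warm invocations
        import boto3
        _clients = (
            boto3.client("sagemaker-runtime"),
            boto3.client("comprehend"),
            boto3.client("s3"),
        )
    return {"processed": len(route(event, *_clients))}
```

This version handles records one after another within a single invocation. Because the two paths share nothing, they could also be split into separate functions if the audio model's latency starts to hold up text scoring.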

Fusing Text and Audio for Better Accuracy

A key architectural decision in multimodal sentiment systems is fusion: how to combine text and audio signals. Late fusion uses separate models for text and audio and then merges their outputs with a meta-classifier. Early fusion combines text and audio embeddings into a single input representation for one model. Amazon SageMaker supports both approaches, offering flexibility in training, deployment, and scaling, while Amazon Bedrock allows rapid experimentation with different foundation models.
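Both fusion styles can be sketched in a few lines of plain Python. For late fusion, the sketch below swaps the trained meta-classifier for its simplest stand-in, a fixed-weight average of each modality's class probabilities; in practice the weight, or a real meta-classifier such as logistic regression, would be fitted on held-out validation data. For early fusion, it builds the joint feature vector a single model would consume by concatenating the two embeddings after L2-normalizing each one. The three-label set and the `audio_weight` parameter are illustrative assumptions.

```python
import math
from typing import Dict, List, Sequence

FUSION_LABELS = ("NEGATIVE", "NEUTRAL", "POSITIVE")  # illustrative label set


def late_fusion(
    text_probs: Dict[str, float],
    audio_probs: Dict[str, float],
    audio_weight: float = 0.5,
) -> Dict[str, float]:
    """Late fusion: blend each modality's class probabilities with a fixed weight.

    A trained meta-classifier would learn this combination from data; a weighted
    average is the simplest stand-in. The result is renormalized to sum to 1.
    """
    if not 0.0 <= audio_weight <= 1.0:
        raise ValueError("audio_weight must be between 0 and 1")
    fused = {
        label: (1.0 - audio_weight) * text_probs.get(label, 0.0)
        + audio_weight * audio_probs.get(label, 0.0)
        for label in FUSION_LABELS
    }
    total = sum(fused.values())
    if total <= 0.0:
        raise ValueError("no probability mass on the known labels")
    return {label: p / total for label, p in fused.items()}


def l2_normalize(vec: Sequence[float]) -> List[float]:
    """Scale a vector to unit length (zero vectors are returned unchanged)."""
    norm = math.sqrt(sum(x * x for x in vec))
    return [x / norm for x in vec] if norm > 0 else list(vec)


def early_fusion_features(text_emb: Sequence[float], audio_emb: Sequence[float]) -> List[float]:
    """Early fusion: one joint feature vector for a single downstream model.

    Each embedding is L2-normalized first so that neither modality dominates
    purely because its values are larger in magnitude.
    """
    return l2_normalize(text_emb) + l2_normalize(audio_emb)
```

As an illustration with made-up scores, a sarcastic "Great, just great." might get text probabilities of 0.1 / 0.2 / 0.7 (NEGATIVE / NEUTRAL / POSITIVE) and audio probabilities of 0.8 / 0.15 / 0.05; with `audio_weight=0.6`, late fusion yields about 0.52 for NEGATIVE, so the vocal cue overrides the literal wording.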

Data, Governance, and Skills Matter

Technology alone is not enough. High-quality, diverse data is critical: it should cover multiple languages, accents, noise conditions, and speaking styles. Evaluation must go beyond overall accuracy to include per-class metrics and performance across different customer segments. Compliance and privacy must be built into the pipeline from day one.
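To make per-class and per-segment evaluation concrete, here is a minimal sketch in plain Python. It computes precision, recall, F1, and support for each sentiment label, plus accuracy broken down by a segment tag attached to each test example. The segment tags (for instance a language or accent group) are a hypothetical labeling scheme; if a dependency is acceptable, scikit-learn's `classification_report` covers the per-class part.

```python
from collections import defaultdict
from typing import Dict, Hashable, Sequence


def per_class_metrics(y_true: Sequence[str], y_pred: Sequence[str]) -> Dict[str, dict]:
    """Precision, recall, F1, and support for every label that appears in either list."""
    if len(y_true) != len(y_pred):
        raise ValueError("y_true and y_pred must be the same length")
    report = {}
    for label in sorted(set(y_true) | set(y_pred)):
        tp = sum(1 for t, p in zip(y_true, y_pred) if t == label and p == label)
        fp = sum(1 for t, p in zip(y_true, y_pred) if t != label and p == label)
        fn = sum(1 for t, p in zip(y_true, y_pred) if t == label and p != label)
        precision = tp / (tp + fp) if tp + fp else 0.0
        recall = tp / (tp + fn) if tp + fn else 0.0
        f1 = 2 * precision * recall / (precision + recall) if precision + recall else 0.0
        report[label] = {"precision": precision, "recall": recall, "f1": f1, "support": tp + fn}
    return report


def accuracy_by_segment(
    y_true: Sequence[str], y_pred: Sequence[str], segments: Sequence[Hashable]
) -> Dict[Hashable, float]:
    """Accuracy computed separately for each customer segment."""
    if not len(y_true) == len(y_pred) == len(segments):
        raise ValueError("all inputs must be the same length")
    correct: Dict[Hashable, int] = defaultdict(int)
    total: Dict[Hashable, int] = defaultdict(int)
    for t, p, s in zip(y_true, y_pred, segments):
        total[s] += 1
        correct[s] += int(t == p)
    return {s: correct[s] / total[s] for s in total}
```

A model can post a respectable overall accuracy while one segment, or an important minority class such as negative sentiment, lags well behind; breaking the numbers down this way makes that gap visible.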

Building such systems also requires teams to understand both the science of emotion and the mechanics of cloud deployment. This is where structured learning and hands-on enablement become important. Across the AWS ecosystem, specialized training providers and consulting partners help organizations and practitioners develop skills in services such as Amazon Bedrock, Amazon SageMaker, and real-time data pipelines. These organizations help companies and individuals bridge the gap between cloud architecture and practical implementation as they build, deploy, and operationalize AI-driven workloads on AWS.

The Future of Sentiment Analysis

Sentiment analysis is evolving from a text-centric capability into a more human-aware intelligence system. As customer interactions increasingly happen through voice and real-time conversations, relying on a single modality limits how accurately emotion and intent can be understood. Multimodal sentiment analysis, which combines linguistic context from text with emotional cues embedded in speech, offers a more faithful representation of how customers truly feel.

AWS enables this evolution through scalable, modular services that support both experimentation and production-grade deployment. When paired with strong data governance, inclusive datasets, and robust evaluation practices, these architectures allow organizations to move beyond surface-level sentiment scores toward deeper, actionable insights. Ultimately, the organizations that succeed will be those that learn to listen holistically: not just to the words customers use, but to the emotions carried in their voices.


WRITTEN BY Vijayanand K V

Vijayanand K V is a Senior Research Associate at CloudThat, specializing in Machine Learning. With 4 years of experience in Machine Learning, he has trained over 1,000 students in Machine Learning, Deep Learning, and Generative AI. Known for simplifying complex concepts through hands-on practice and for helping students use technology creatively, he brings deep technical knowledge and practical application to every learning experience. Vijayanand's passion for learning every day is reflected in his approach to learning and development.


March 25, 2026
