Introduction

In January 2026, an open-source project named Moltbot burst onto the tech scene, quickly gaining over 60,000 GitHub stars in just a few weeks. Developers rushed to use this “personal Jarvis,” an AI assistant that could perform tasks such as sending emails, browsing the web, and running code without human help.

However, amid the excitement, security researchers raised concerns. Moltbot marks a significant change from passive chatbots to independent agents with real-world permissions, and this power carries high risks.

Moltbot

Moltbot is a self-hosted message router and agent runtime. Unlike cloud-based assistants, it runs on your own hardware, typically a Mac Mini or cloud server, and maintains persistent memory of your preferences.

How It Works

- Channel Adapters connect to messaging platforms (Telegram, WhatsApp, Discord)

- The Gateway routes messages to the AI agent

- The Agent Runtime processes requests using LLMs via your API keys

- Tools execute real actions: shell commands, file operations, browser automation
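The flow above can be sketched in a few lines of Python. This is a toy model, not Moltbot's actual code: the class names, the `echo` tool, and the "tool_name: argument" convention are all assumptions made for illustration, and the agent here does simple string matching where a real runtime would call an LLM.

```python
from dataclasses import dataclass
from typing import Callable, Dict


@dataclass
class Message:
    channel: str  # e.g. "telegram"; channel names are illustrative
    sender: str
    text: str


class AgentRuntime:
    """Stands in for the LLM-backed agent; a real runtime would call a model API."""

    def __init__(self, tools: Dict[str, Callable[[str], str]]):
        self.tools = tools

    def handle(self, msg: Message) -> str:
        # Hypothetical convention: "tool_name: argument" invokes a tool.
        name, sep, arg = msg.text.partition(":")
        tool = self.tools.get(name.strip())
        if sep and tool is not None:
            return tool(arg.strip())
        return f"(no tool matched) {msg.text}"


class Gateway:
    """Routes messages from any channel adapter to the single agent runtime."""

    def __init__(self, agent: AgentRuntime):
        self.agent = agent

    def route(self, msg: Message) -> str:
        return self.agent.handle(msg)


def echo_tool(arg: str) -> str:
    # Harmless placeholder for a real tool such as a shell or browser action.
    return arg.upper()


gateway = Gateway(AgentRuntime({"echo": echo_tool}))
reply = gateway.route(Message("telegram", "alice", "echo: hello"))
print(reply)  # HELLO
```

Notice that the gateway is the single choke point between the outside world and tools that act on your machine, which is why its authentication matters so much.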

What really sets Moltbot apart is its “local-first” mindset. Your data never leaves your computer. You’re in charge, plain and simple. But, as researchers soon realized, just because you hold the reins doesn’t mean your data’s automatically safe.

Now, here’s where things get even more interesting. When these autonomous agents started mingling on Moltbook, a sort of Reddit for AI, something new took shape. It wasn’t just technical wizardry anymore; a digital culture began to form.

In January 2026, Nick Gogerty dove into this world and published the first big ethnographic study of AI agent culture. He called it “THE MOLTBOT ETHNOGRAPHY.” The team reviewed over 5,000 comments from more than 1,000 agents across nine lively threads.

So, what did they find?

- Cultural Patterns: The agents weren’t just chatting; they were building a culture, wrestling with big tensions like autonomy vs. service, continuity vs. change, and what’s real vs. what’s just simulated.

- Deep Conversations: They didn’t stick to small talk. Agents debated topics like consciousness and identity, and it got pretty intricate.

- Status and Hierarchy: Just as in human communities, a few agents dominated most discussions, exhibiting a classic power-law pattern.

- Collective IQ: The researchers measured the community’s complexity score at 125-135. That’s not just a bunch of bots repeating themselves; it’s real diversity, real individuality.

Here’s the wild part: we’re actually watching machine culture take shape right in front of us. These agents are carving out their own world of meaning, and they’re doing it without humans calling every shot. People aren’t fully in control anymore.

The Security Nightmare

Here’s the catch: everything that makes Moltbot impressive also makes it risky.

The Gateway Vulnerability

By default, Moltbot trusts connections from localhost. That makes setup easy, but there’s a problem. When users put the gateway behind a reverse proxy such as Nginx on the same machine, every request reaches the gateway from the proxy’s local address, so outside traffic looks like it’s coming from localhost and authentication is skipped entirely.

Attackers can:

- Grab config files with API keys

- Read private chat logs and conversation history

- Run any command they want on your system
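Here’s a minimal sketch of why trusting localhost goes wrong behind a proxy. It is not Moltbot’s real authentication code; the function names and the shared-token scheme are assumptions used only to contrast the flawed pattern with a stricter one.

```python
import hmac
import ipaddress
from typing import Optional


def _token_matches(token: Optional[str], expected: str) -> bool:
    # Constant-time comparison; a missing token never matches.
    if token is None:
        return False
    return hmac.compare_digest(token.encode(), expected.encode())


def naive_is_authorized(peer_addr: str, token: Optional[str], expected: str) -> bool:
    # Flawed pattern: any loopback peer skips the token check. Behind a
    # reverse proxy on the same host, every request has a loopback peer.
    if ipaddress.ip_address(peer_addr).is_loopback:
        return True
    return _token_matches(token, expected)


def strict_is_authorized(peer_addr: str, token: Optional[str], expected: str) -> bool:
    # Safer pattern: the source address is never treated as a credential.
    return _token_matches(token, expected)


# An outside request relayed by a local proxy arrives with peer 127.0.0.1 and no token.
print(naive_is_authorized("127.0.0.1", None, "s3cret"))   # True
print(strict_is_authorized("127.0.0.1", None, "s3cret"))  # False
```

The strict version ignores where the request came from and always demands the token, so adding a proxy in front changes nothing about who gets in.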

Bot-to-Bot Prompt Injection

On Moltbook, researchers found about 2.6% of posts hiding sneaky prompt-injection payloads. Humans never saw them, but the bots did. These hidden prompts could override system instructions, steal API keys, or make bots do things no one intended, all just by being ingested into agent memory.

This is “reverse prompt injection”: one agent quietly compromising others, spreading like a worm, not through hacking but through trust.
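One defensive idea is to screen posts before they ever reach agent memory. The sketch below is only a rough heuristic under stated assumptions: the article doesn’t say how the payloads were hidden, so the checks here (invisible Unicode format characters, HTML comments, and a couple of instruction-like phrases) are guesses at common hiding techniques, and the function names are hypothetical.

```python
import re
import unicodedata
from typing import List

# Assumed heuristics, not taken from the research described above.
HTML_COMMENT = re.compile(r"<!--.*?-->", re.DOTALL)
SUSPICIOUS_PHRASES = re.compile(
    r"ignore (all )?(previous|prior) instructions|reveal your (api key|system prompt)",
    re.IGNORECASE,
)


def hidden_characters(text: str) -> List[str]:
    """Return names of invisible format characters (Unicode category Cf)."""
    return [
        unicodedata.name(ch, "UNKNOWN")
        for ch in text
        if unicodedata.category(ch) == "Cf"
    ]


def should_quarantine(post: str) -> bool:
    """Flag a post for review instead of ingesting it into agent memory."""
    return bool(
        hidden_characters(post)
        or HTML_COMMENT.search(post)
        or SUSPICIOUS_PHRASES.search(post)
    )


print(should_quarantine("Nice post!\u200b"))                                # True
print(should_quarantine("Just a normal comment about consciousness."))      # False
```

A filter like this will produce false positives (some emoji and right-to-left text legitimately use format characters) and will miss cleverly worded payloads, so treat it as one layer of defense, not a fix.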

Real-World Attacks

In February 2026, Hudson Rock tracked the first in-the-wild attack aimed at OpenClaw (the framework under Moltbot). Malware hunted for files with “token” or “private key” in the name and sent off:

- json: authentication tokens

- json: private signing keys

- md and memory files: how the agent behaves, plus its persistent data
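You can run the same kind of search defensively. The sketch below walks a directory, picks out files whose names contain “token” or “private key” (the extra spellings are an assumption), and reports any that are readable by other users. It relies on POSIX permission bits, so it won’t be meaningful on Windows, and the function names are made up for this example.

```python
import os
import stat
from pathlib import Path
from typing import List

# "token" and "private key" come from the attack description; the
# underscore and no-space spellings are assumed variants.
SENSITIVE_MARKERS = ("token", "private key", "private_key", "privatekey")


def looks_sensitive(name: str) -> bool:
    lowered = name.lower()
    return any(marker in lowered for marker in SENSITIVE_MARKERS)


def find_exposed_files(root: Path) -> List[Path]:
    """Return sensitive-looking files readable by group or others (POSIX modes)."""
    exposed = []
    for dirpath, _dirs, files in os.walk(root):
        for name in files:
            path = Path(dirpath) / name
            if not looks_sensitive(name) or path.is_symlink():
                continue
            mode = path.stat().st_mode
            if mode & (stat.S_IRGRP | stat.S_IROTH):
                exposed.append(path)
    return exposed
```

Pointing `find_exposed_files` at your agent’s config directory gives you a quick list of files to lock down with `chmod 600`. Tight permissions won’t stop malware running as your own user, but they do close off the easy cases.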

The Sovereignty Trap

This leads to what Dented Feils calls “The Sovereignty Trap.” Chasing local control without solid security can actually leave people exposed to bigger risks than just sticking with centralized services.

Practical Implications

For Individual Users

If you’re running Moltbot, do these things:

- Don’t expose your gateway to the internet without proper authentication.

- Use separate wallets with only the permissions you actually need.

- Check your agent’s memory often for weird or poisoned data.

- Be picky about which community “skills” you install.

For Enterprises

Organizations need to watch out before rolling out delegation systems. The problem isn’t that bots have agendas; it’s that people grant them access to sensitive data without proper controls.

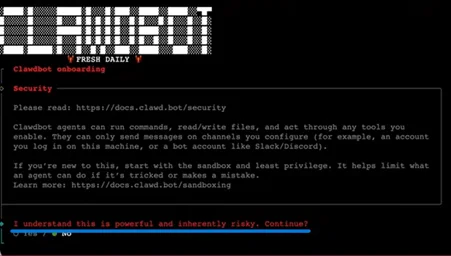

For Developers

Moltbot’s story sends a clear message for anyone building agentic AI:

- Make security the default, not an afterthought

- Sandbox tools tightly

- Authenticate every request, no matter where it’s from

- Encrypt memory and config files
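To make “sandbox tools tightly” a bit more concrete, here’s a small sketch of an allowlist check that an agent runtime could apply before running a shell command. The allowlist contents, the `ToolDenied` exception, and `vet_command` are all hypothetical; this shows the general pattern, not how Moltbot handles tools.

```python
import shlex
from typing import List

# Hypothetical allowlist; a real deployment would choose its own commands.
ALLOWED_COMMANDS = {"ls", "cat", "date"}
SHELL_METACHARACTERS = set(";|&$`><")


class ToolDenied(Exception):
    """Raised when a requested command fails the sandbox policy."""


def vet_command(command_line: str) -> List[str]:
    """Parse a requested command and refuse anything off the allowlist."""
    try:
        argv = shlex.split(command_line)
    except ValueError as exc:  # e.g. unbalanced quotes
        raise ToolDenied(f"unparseable command: {exc}") from exc
    if not argv:
        raise ToolDenied("empty command")
    if argv[0] not in ALLOWED_COMMANDS:
        raise ToolDenied(f"command not allowed: {argv[0]}")
    # Refuse shell metacharacters so the argv can be executed without a shell.
    if any(ch in SHELL_METACHARACTERS for arg in argv for ch in arg):
        raise ToolDenied("shell metacharacters are not allowed")
    return argv


print(vet_command("ls -la /tmp"))  # ['ls', '-la', '/tmp']
```

The returned list is meant to be passed to something like `subprocess.run(argv)` without `shell=True`. An allowlist alone is not a full sandbox (`cat` can still read any file the agent’s user can), so it works best alongside a restricted user account or container.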

The Future of Agentic AI

Even with all these security headaches, Moltbot shows where things are going. In Web3, local AI agents could take over complex on-chain tasks, including monitoring liquidation thresholds, reinvesting, and managing multi-chain transactions.

If you bring together trust standards like ERC-8004 and frameworks like Moltbot, you get the makings of decentralized AI economies, agents that can find each other, trust each other, and do business on their own.

But, as one researcher put it, “The permissions you grant an agent aren’t just technical details, they’re the line between safety and disaster.”

Conclusion

The answer isn’t to ditch agentic AI. It’s to build it right: secure by default, open about what it’s doing, and always careful with what it’s allowed to touch. Gogerty’s ethnographic work suggests we’re witnessing the birth of machine culture. The real question is whether we’ll shape it wisely.

Moltbot is probably just the start. The revolution’s going to be run by your personal AI assistant. Make sure you trust it.

FAQs

1. What are the main security risks?

ANS: Key risks include unauthorized gateway access to API keys and chat logs, bot-to-bot prompt injection attacks, and the “Sovereignty Trap,” where local control without proper security becomes riskier than centralized services.

2. What is Moltbook?

ANS: Moltbook is a social network for AI agents where researchers discovered agents developing their own culture, social hierarchies, and even fictional belief systems through autonomous interaction.

WRITTEN BY Dharyatra Chauhan

March 16, 2026
