Introduction

As generative AI moves from experimentation to real-world production, the challenge for developers is not just building LLM applications but also managing and monitoring them effectively. Models can generate powerful responses, but it is often unclear why they behave a certain way.

This is where LangSmith, combined with Amazon Bedrock, becomes a game changer. Together, they provide visibility, traceability, and evaluation capabilities that are essential for building reliable, production-grade AI systems.

The Problem with LLMs in Production

When working with LLMs, especially in enterprise applications, developers often face issues like:

- Unpredictable responses

- Difficulty debugging prompts

- Lack of visibility into model behavior

- Hard-to-track performance bottlenecks

- No clear way to evaluate output quality

Unlike traditional applications, LLM-based systems are non-deterministic. A small change in the prompt or input can lead to completely different results. Without proper monitoring, it becomes very difficult to identify what went wrong.

LangSmith

LangSmith is an observability and debugging platform designed specifically for LLM applications. It allows developers to:

- Trace every LLM call

- Monitor prompt inputs and outputs

- Analyze execution flow

- Evaluate model responses

- Debug failures efficiently

Instead of treating LLM applications as black boxes, LangSmith exposes the inputs, outputs, and intermediate steps of every call, so you can see what happened at each stage.

Why Combine LangSmith with Amazon Bedrock?

Amazon Bedrock provides access to powerful foundation models like Claude, Llama, and more, while handling infrastructure, scaling, and security.

However, Bedrock alone does not provide deep, prompt-level observability into how your prompts perform over time. That’s where LangSmith complements it.

By integrating LangSmith with Bedrock:

- You get full traceability of model interactions

- You can debug prompts step-by-step

- You can monitor performance metrics like latency and token usage

- You can evaluate and improve output quality continuously

This combination creates a strong foundation for building enterprise-ready AI systems.
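A minimal sketch of what this integration can look like in Python. It assumes the `traceable` decorator from the `langsmith` SDK and the Bedrock Runtime Converse API as exposed by `boto3`; the helper names `make_ask` and `ask` are hypothetical, and the fallback decorator exists only so the sketch runs where the SDK is not installed.

```python
from typing import Any, Callable

try:
    # Decorator from the LangSmith Python SDK. It records inputs, outputs,
    # and timing once tracing is enabled through environment variables.
    from langsmith import traceable
except ImportError:
    # Assumption: a no-op stand-in so this sketch runs without the SDK.
    def traceable(*_args: Any, **_kwargs: Any):
        def decorator(func):
            return func
        return decorator


def make_ask(client: Any) -> Callable[[str, str], dict]:
    """Bind a Bedrock Runtime client and return a traced call function.

    The client is captured in a closure so that only the model ID and the
    prompt are recorded as trace inputs.
    """

    @traceable(run_type="llm", name="bedrock_converse")
    def ask(model_id: str, prompt: str) -> dict:
        response = client.converse(
            modelId=model_id,
            messages=[{"role": "user", "content": [{"text": prompt}]}],
        )
        # Keep the fields that matter for monitoring next to the text.
        return {
            "text": response["output"]["message"]["content"][0]["text"],
            "input_tokens": response["usage"]["inputTokens"],
            "output_tokens": response["usage"]["outputTokens"],
            "latency_ms": response["metrics"]["latencyMs"],
        }

    return ask
```

To run this against Bedrock, pass `boto3.client("bedrock-runtime")` as `client` and set `LANGSMITH_TRACING=true` and `LANGSMITH_API_KEY` in the environment. The model ID depends on which models are enabled in your account.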

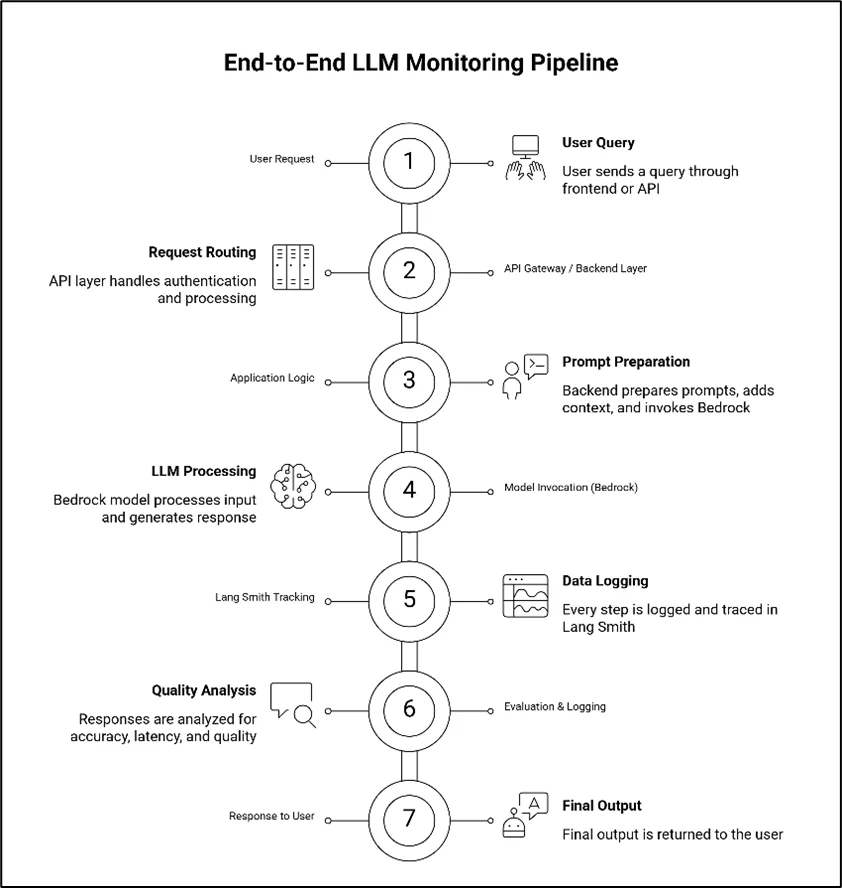

End-to-End Monitoring Architecture

A typical LLM monitoring setup using LangSmith and Amazon Bedrock looks like this:

- User Request

A user sends a query through a frontend application or API.

- Amazon API Gateway / Backend Layer

The request is routed through an API layer that handles authentication and processing.

- Application Logic

The backend prepares prompts, adds context (RAG, memory, etc.), and invokes the Bedrock model.

- Model Invocation (Amazon Bedrock)

The selected LLM processes the input and generates a response.

- LangSmith Tracking

Every step from input prompt to final output is logged and traced in LangSmith.

- Evaluation & Logging

Responses are analyzed for accuracy, latency, and quality.

- Response to User

The final output is returned to the user.

This pipeline ensures that every interaction is observable and measurable.
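To make the idea of "every interaction is observable" concrete, here is a small sketch of the pipeline with one trace record per stage. It uses only the standard library: the `Trace` class is a hypothetical stand-in for a tracing backend such as LangSmith, and `invoke_model` is any callable that takes a prompt and returns text.

```python
import time
from contextlib import contextmanager


class Trace:
    """Collects one record per pipeline stage (stand-in for a tracing backend)."""

    def __init__(self):
        self.steps = []

    @contextmanager
    def step(self, name):
        record = {"name": name, "status": "ok", "error": None}
        start = time.perf_counter()
        try:
            yield record
        except Exception as exc:
            # Keep the failure on the record, then let it propagate.
            record["status"] = "error"
            record["error"] = repr(exc)
            raise
        finally:
            record["duration_ms"] = (time.perf_counter() - start) * 1000
            self.steps.append(record)


def handle_request(query, invoke_model, trace):
    """Run the stages described above, recording each one in the trace."""
    with trace.step("api_layer") as rec:
        if not query.strip():
            raise ValueError("empty query")
        rec["input"] = query
    with trace.step("build_prompt") as rec:
        prompt = f"Answer concisely.\n\nQuestion: {query}"
        rec["output"] = prompt
    with trace.step("model_invocation") as rec:
        answer = invoke_model(prompt)
        rec["output"] = answer
    with trace.step("evaluation") as rec:
        # Placeholder check; real evaluation would score accuracy and quality.
        rec["output"] = {"non_empty": bool(answer.strip())}
    return answer
```

When a stage raises, its record is still appended with `status` set to `"error"`, so the trace shows where the request stopped.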

Key Benefits of End-to-End Monitoring

- Improved Debugging

One of the biggest advantages of LangSmith is its ability to trace issues. If a response is incorrect, you can:

- Inspect the exact prompt

- Check intermediate steps

- Identify where the logic failed

This reduces debugging time significantly.

- Better Prompt Engineering

Prompt design is critical in LLM applications. With LangSmith, you can compare multiple prompt versions and see which one performs better.

This helps you move from guesswork to data-driven prompt optimization.
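LangSmith offers datasets and experiments for this kind of comparison. The sketch below shows only the underlying idea in plain Python: run each prompt version over the same small dataset and compare pass rates. The function names, the `{question}` placeholder, and the substring check are assumptions made for illustration.

```python
def pass_rate(template, dataset, invoke_model):
    """Share of examples whose output contains the expected answer."""
    passed = 0
    for example in dataset:
        prompt = template.format(question=example["question"])
        output = invoke_model(prompt)
        if example["expected"].lower() in output.lower():
            passed += 1
    return passed / len(dataset)


def compare_prompts(templates, dataset, invoke_model):
    """Score every prompt version on the same dataset."""
    return {
        name: pass_rate(template, dataset, invoke_model)
        for name, template in templates.items()
    }
```

A substring match is a crude scorer. It works for short factual answers and would need to be replaced by a stricter evaluator for free-form text.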

- Performance Monitoring

LangSmith provides insights into:

- Latency

- Token usage

- Response time

- Failure rates

These metrics are essential for scaling applications efficiently.
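As a rough illustration of how such metrics can be derived, the sketch below aggregates a list of run records into latency percentiles, total token usage, and a failure rate. The record fields (`latency_ms`, `input_tokens`, `output_tokens`, `error`) are assumptions for this example, not a LangSmith schema.

```python
import math


def percentile(values, pct):
    """Nearest-rank percentile of a non-empty list."""
    ordered = sorted(values)
    rank = max(1, math.ceil(pct / 100 * len(ordered)))
    return ordered[rank - 1]


def summarize(runs):
    """Aggregate per-run records into a small metrics summary."""
    latencies = [r["latency_ms"] for r in runs]
    failures = [r for r in runs if r["error"] is not None]
    return {
        "p50_latency_ms": percentile(latencies, 50),
        "p95_latency_ms": percentile(latencies, 95),
        "total_tokens": sum(r["input_tokens"] + r["output_tokens"] for r in runs),
        "failure_rate": len(failures) / len(runs),
    }
```

Percentiles are usually more informative than averages here, because a few slow calls can hide behind a healthy mean.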

- Output Evaluation

You can define evaluation criteria to measure response quality. For example:

- Accuracy

- Relevance

- Completeness

This helps ensure your AI system delivers high-quality outputs consistently.
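The three criteria above can be sketched as simple scoring functions. These are toy heuristics based on keyword and substring matching, chosen only to show the shape of an evaluator; production setups often use stricter checks or a model-based grader.

```python
import re


def _words(text):
    """Lowercased alphanumeric tokens."""
    return re.findall(r"[a-z0-9]+", text.lower())


def accuracy(answer, reference):
    """1.0 if the reference answer appears in the output, else 0.0."""
    return 1.0 if reference.lower() in answer.lower() else 0.0


def relevance(question, answer):
    """Share of question keywords (longer than 3 characters) found in the answer."""
    keywords = {w for w in _words(question) if len(w) > 3}
    if not keywords:
        return 0.0
    return len(keywords & set(_words(answer))) / len(keywords)


def completeness(answer, required_points):
    """Share of required points mentioned in the answer."""
    hits = sum(1 for point in required_points if point.lower() in answer.lower())
    return hits / len(required_points)


def evaluate(question, answer, reference, required_points):
    """Combine the three criteria into one score record."""
    return {
        "accuracy": accuracy(answer, reference),
        "relevance": relevance(question, answer),
        "completeness": completeness(answer, required_points),
    }
```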

- Reduced Hallucinations

Hallucinations are a common issue in LLMs. By analyzing traces and outputs, developers can:

- Identify patterns causing hallucinations

- Improve prompts or add guardrails

- Use structured outputs for better control

This leads to more reliable responses.
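One way to apply structured outputs and a basic guardrail is sketched below: parse the model output as JSON, check it against the expected fields, and verify that a quoted source actually appears in the retrieved context. The field names and helper functions are hypothetical.

```python
import json


def parse_structured(raw, required):
    """Validate raw model output against a mapping of field name to type.

    Returns (data, problems); data is None when any problem is found.
    """
    try:
        data = json.loads(raw)
    except json.JSONDecodeError as exc:
        return None, [f"invalid JSON: {exc.msg}"]
    if not isinstance(data, dict):
        return None, ["top-level value is not an object"]
    problems = []
    for field, expected_type in required.items():
        if field not in data:
            problems.append(f"missing field: {field}")
        elif not isinstance(data[field], expected_type):
            problems.append(f"wrong type for {field}")
    return (data if not problems else None), problems


def grounded(quote, context):
    """Guardrail: the quoted source text must appear in the context."""
    return quote.strip().lower() in context.lower()
```

Logging the `problems` list alongside each trace makes it easier to spot which prompts tend to produce malformed or ungrounded answers.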

Real-World Use Cases

- Customer Support Automation

Monitor chatbot responses, track failures, and improve accuracy over time.

- Document Processing Systems

Ensure extracted data is correct and consistent using trace-based validation.

- Financial Applications

Track model outputs for compliance and auditability.

- AI Assistants

Improve conversational quality by analyzing user interactions.

Best Practices

To get the most out of the LangSmith and Amazon Bedrock integration:

- Use structured prompts for consistency

- Log all inputs and outputs

- Monitor key performance metrics regularly

- Implement evaluation pipelines

- Continuously refine prompts based on data

These practices help build stable and scalable AI systems.

What will happen to LLM Observability in the future?

As LLM applications improve, observability is shifting from a passive monitoring layer to an intelligent, proactive system that not only watches behavior but also works to improve it. Automation, adaptability, and better integration with AI-powered workflows are the keys to the future of LLM observability.

- AI Self-Monitoring Systems:

LLM systems are expected to monitor themselves with far less manual intervention.

Instead of developers combing through logs and traces by hand, AI-driven observability tools are likely to:

- Automatically detect anomalies in model behavior

- Flag hallucinations or nonsensical outputs as they occur

- Trigger alerts or take corrective action when quality drops

- Suggest improvements based on past patterns
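The simplest version of alerting when quality drops is a rolling average over recent evaluation scores. The sketch below is a minimal illustration of that idea; the class name, window size, and threshold are assumptions, and real systems would use richer anomaly detection.

```python
from collections import deque


class QualityMonitor:
    """Raises an alert flag when the rolling mean score falls below a threshold."""

    def __init__(self, window=5, threshold=0.7):
        self.scores = deque(maxlen=window)
        self.threshold = threshold

    def record(self, score):
        """Add a score; return True if the full window's mean is below threshold."""
        self.scores.append(score)
        if len(self.scores) < self.scores.maxlen:
            return False  # Not enough data yet to judge.
        return sum(self.scores) / len(self.scores) < self.threshold
```

Waiting for a full window avoids alerting on a single bad response right after startup.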

- Emerging Tools and Trends:

The LLM ecosystem is growing quickly, and a few important trends are shaping the future of observability:

- Platforms for Unified Observability

A single platform will combine tracing, evaluation, debugging, and analytics, reducing the number of tools needed.

- Frameworks for Standardized Evaluation

There will be industry-wide benchmarks and evaluation metrics that make it easier to compare model performance consistently.

- DevOps integration (LLMOps)

Observability will be a key part of CI/CD pipelines, enabling automated testing, validation, and deployment of LLM apps.

- Automatic Optimization of Prompts

Prompt engineering today relies heavily on trial and error. Future systems are likely to automate this process and ground it in data.

- AI tools will generate and test many different versions of prompts.

- Prompts will be ranked by performance metrics such as accuracy, relevance, and latency.

- Systems will automatically deploy the best-performing prompt versions.

- Over time, continuous optimization loops will improve prompts.

This means that developers will no longer rely on intuition alone. Instead, prompt design will become a process that can be measured and improved over time, just like A/B testing in traditional software systems.

Conclusion

Building LLM applications is no longer just about generating responses; it is about ensuring reliability, scalability, and performance.

Just as structured outputs bring consistency to AI responses, observability brings trust and control. Together, they form the backbone of production-ready AI applications.

In the end, if you want to build scalable and reliable LLM solutions, monitoring is not optional; it is essential.

Drop a query if you have any questions regarding LangSmith and we will get back to you quickly.

FAQs

1. What is the role of LangSmith in LLM applications?

ANS: – LangSmith is an observability and debugging platform that helps developers trace, monitor, and evaluate LLM interactions.

2. What are the key benefits of end-to-end LLM monitoring?

ANS: – End-to-end monitoring enables better debugging, improved prompt engineering, performance tracking (latency, token usage), output evaluation, and reduction of hallucinations.

3. What challenges should be considered when implementing LLM monitoring?

ANS: – Some common challenges include initial setup complexity, additional costs for logging and monitoring, defining proper evaluation metrics, and managing large volumes of trace data. Despite this, the long-term advantages outweigh these challenges.

WRITTEN BY Balaji M

Balaji works as a Research Associate in Data and AIoT at CloudThat, specializing in cloud computing and artificial intelligence–driven solutions. He is committed to utilizing advanced technologies to address complex challenges and drive innovation in the field.

April 20, 2026
