|

Voiced by Amazon Polly |

Overview

Artificial Intelligence (AI) applications have become mission‑critical for many organizations. From recommendation engines and fraud detection to conversational bots and computer vision systems, AI workloads often operate continuously and directly impact business revenue and user trust. Any prolonged downtime, data loss, or model inconsistency can lead to significant financial and reputational damage.

In cloud environments like Amazon Web Services (AWS), disaster recovery (DR) is not just about infrastructure availability, it must also account for data pipelines, trained models, feature stores, inference endpoints, and orchestration workflows. This blog explores comprehensive disaster recovery strategies specifically tailored for AI applications running on AWS, covering architectural patterns, services, and best practices.

Pioneers in Cloud Consulting & Migration Services

- Reduced infrastructural costs

- Accelerated application deployment

Understanding Disaster Recovery for AI Workloads

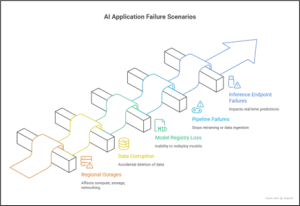

Disaster recovery refers to the ability of a system to recover from failures such as region outages, data corruption, accidental deletions, security incidents, or large‑scale service disruptions. For AI applications, disaster recovery (DR) planning is more complex because it involves multiple layers:

- Data layer: Raw data, processed datasets, feature stores, and labels

- Model layer: Training artifacts, model binaries, checkpoints, and versions

- Pipeline layer: ETL/ELT jobs, training workflows, and CI/CD for ML

- Serving layer: Real‑time or batch inference endpoints

- Monitoring and governance: Logs, metrics, lineage, and audit trails

Traditional disaster recovery (DR) strategies, such as backups and replication, still apply, but AI systems require additional considerations, including model reproducibility, data drift, and training continuity.

Key disaster recovery (DR) Metrics: RPO and RTO

Before designing a disaster recovery (DR) strategy, it is essential to define two metrics:

- Recovery Point Objective (RPO): The maximum acceptable data loss measured in time (e.g., last 5 minutes of training data).

- Recovery Time Objective (RTO): The maximum acceptable downtime before the system must be restored.

For example, a real‑time fraud detection system may require near-zero RPO and very low RTO, while an offline recommendation training pipeline may tolerate higher values. These metrics guide architectural choices and cost trade‑offs.

Data Layer Disaster Recovery

Multi‑Availability Zone (Multi‑AZ) Design

Most AWS data services, such as Amazon S3, Amazon DynamoDB, and Amazon Aurora, are inherently Multi-AZ. Storing raw data, processed datasets, and features in these services provides high durability and availability by default.

- Amazon S3 offers 11 nines of durability and automatically replicates data across multiple AZs.

- DynamoDB supports Multi‑AZ replication and on‑demand backups.

- Amazon Aurora replicates data across AZs with automatic failover.

This setup protects against single‑AZ failures with minimal effort.

Cross‑Region Replication

For region‑level disasters, cross‑region replication is essential:

- Amazon S3 Cross‑Region Replication (CRR) ensures that training data and artifacts are copied to a secondary region.

- Amazon DynamoDB Global Tables provide multi‑region active‑active replication for feature stores or metadata.

- AWS Backup can centrally manage and automate cross‑region backups for supported services.

This approach significantly reduces RPO for critical AI data assets.

Model Artifacts and Registry Recovery

AI models are core intellectual property. Losing trained models can mean weeks of retraining.

Centralized Model Storage

Store model artifacts in Amazon S3 with:

- Versioning enabled

- Server‑side encryption

- Lifecycle policies for cost optimization

Using Amazon S3 versioning allows recovery from accidental deletions or overwrites.

Model Registry with Replication

If using Amazon SageMaker Model Registry:

- Export model artifacts and metadata to replicated Amazon S3 buckets

- Maintain infrastructure‑as‑code (IaC) definitions for redeploying models

For custom registries, store metadata in Amazon DynamoDB Global Tables or Amazon Aurora Global Database to ensure cross‑region availability.

Training Pipeline Disaster Recovery

Stateless and Reproducible Training

Design training pipelines to be reproducible and stateless:

- Use code stored in Git repositories

- Parameterize training jobs using configuration files

- Store intermediate checkpoints in Amazon S3

This enables restarting training jobs in another region without manual intervention.

Orchestration Resilience

For orchestration tools:

- AWS Step Functions can be redeployed in a secondary region using IaC tools like CloudFormation or Terraform

- Amazon MWAA (Managed Airflow) environments should back up DAGs and metadata regularly

By quickly redeploying orchestration logic, training workflows can resume with minimal downtime.

Inference and Serving Layer disaster recovery (DR)

Multi‑AZ Endpoints

For real‑time inference using Amazon SageMaker endpoints:

- Deploy endpoints across multiple AZs

- Enable auto‑scaling to handle traffic spikes during failover

This protects against infrastructure failures within a region.

Multi‑Region Inference

For high‑availability AI applications:

- Deploy inference endpoints in multiple regions

- Use Amazon Route 53 health checks and latency‑based routing

In case of a regional failure, traffic is automatically routed to the healthy region, achieving low RTO.

Batch Inference Recovery

Batch inference jobs can be restarted easily if:

- Input data is stored in replicated Amazon S3 buckets

- Job configurations are defined as code

This approach keeps batch processing resilient and repeatable.

Feature Store Disaster Recovery

Feature stores are critical for consistency between training and inference.

- Amazon SageMaker Feature Store stores offline features in Amazon S3 and online features in Amazon DynamoDB.

- Enable Amazon DynamoDB backups and, if required, Global Tables

- Replicate offline feature data using Amazon S3 CRR

This ensures that features remain available and consistent during failover scenarios.

CI/CD and Infrastructure as Code

Infrastructure as Code (IaC)

Using IaC tools like AWS CloudFormation, CDK, or Terraform is essential for disaster recovery (DR):

- The entire AI infrastructure can be recreated in another region

- Configurations remain consistent and auditable

CI/CD Pipelines

Store pipeline definitions in version control and ensure build artifacts are stored in replicated Amazon S3 buckets or Amazon ECR with cross‑region replication enabled.

This allows rapid redeployment of AI services after a disaster.

Monitoring, Logging, and Observability

Without observability, disaster recovery (DR) efforts may fail silently.

- Amazon CloudWatch for metrics and alarms

- AWS CloudTrail for auditing and recovery analysis

- Centralized logging with cross‑region replication

Set alarms for data ingestion failures, model endpoint health, and pipeline errors so that recovery actions can be triggered automatically or manually.

Conclusion

Disaster recovery for AI applications in AWS goes beyond traditional backup and restore mechanisms. It requires a holistic approach that covers data, models, pipelines, inference, and governance.

As AI continues to power critical business functions, investing in disaster recovery strategies is no longer optional, it is a fundamental requirement for sustainable and reliable AI operations in the cloud.

Drop a query if you have any questions regarding Disaster recovery and we will get back to you quickly.

Empowering organizations to become ‘data driven’ enterprises with our Cloud experts.

- Reduced infrastructure costs

- Timely data-driven decisions

About CloudThat

FAQs

1. Is disaster recovery really necessary for small or early-stage AI applications?

ANS: – Yes. Even if your AI system is small today, recovery planning prevents future chaos. Early-stage systems often evolve into business-critical platforms. Designing with DR in mind from the beginning avoids expensive re-architecture later.

2. What is the biggest mistake teams make in AI disaster recovery?

ANS: – The most common mistake is backing up data but ignoring model reproducibility. If you cannot recreate your training environment, dependencies, and feature logic, restoring raw data alone will not restore your AI system.

3. How often should AI models be backed up?

ANS: – Model artifacts should be versioned automatically after every successful training run. Instead of thinking in terms of “backup frequency,” focus on automated versioning and immutable storage.

WRITTEN BY Modi Shubham Rajeshbhai

Shubham Modi is working as a Research Associate - Data and AI/ML in CloudThat. He is a focused and very enthusiastic person, keen to learn new things in Data Science on the Cloud. He has worked on AWS, Azure, Machine Learning, and many more technologies.

Login

Login

March 13, 2026

March 13, 2026 PREV

PREV

Comments