In recent years, the volume of data that the IT industry uses for analytics and processing has grown sharply. Traditionally, this data is stored in a relational database on an on-premises server. However, such a setup can handle only a limited number of requests because its infrastructure is limited.

Data today is increasingly dynamic, unstructured, and rapidly changing. A NoSQL database is a good fit for this kind of data. Instead of writing SQL queries, we use a programming language to perform the CRUD (create, read, update, delete) operations on the data. NoSQL databases are therefore in high demand: they are lightweight, adapt to rapidly changing data, and handle vast amounts of complex, unstructured data.

Two widely used services today are MongoDB and AWS DynamoDB. Both are NoSQL databases with similar capabilities, but each has its own advantages: MongoDB is platform-agnostic, whereas AWS DynamoDB is a managed AWS service. On the other hand, a self-hosted MongoDB deployment is limited by the infrastructure it is hosted on. In contrast, AWS DynamoDB is highly scalable, provides high throughput with low latency, and supports global tables.

Some key terms are similar in concept but named differently: a MongoDB collection corresponds to a DynamoDB table, a document to an item, and a field to an attribute.

If you want to improve your data handling capability, you might be interested in migrating from MongoDB to AWS DynamoDB to take advantage of a highly scalable, managed infrastructure.

Today we will use an AWS service called AWS Database Migration Service (AWS DMS) to migrate the data.

AWS Database Migration Service helps you migrate a database from an on-premises server, an EC2 instance, a standalone server, or AWS RDS to an AWS-backed database service with minimal downtime.

I have deployed my source MongoDB database on an on-premises server running Ubuntu 18.04. I assume you have some data in a collection in your MongoDB database that is ready to be sent to Amazon DynamoDB.

AWS DMS offers two modes for migrating from MongoDB: document mode and table mode.

We will be using table mode for the migration.
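To make the difference between the two modes concrete, here is a minimal Python sketch of the idea. It is not AWS DMS code. It assumes that document mode keeps each document as JSON text in a single `_doc` column, and that table mode turns top-level fields into columns and flattens nested values. The dotted column names and the sample document are illustrative assumptions, so the actual column names DMS produces may differ.

```python
import json
from typing import Any, Dict


def as_document_mode(doc: Dict[str, Any]) -> Dict[str, Any]:
    """Illustrate document mode: the whole document is kept as one JSON string."""
    return {"_doc": json.dumps(doc, sort_keys=True)}


def as_table_mode(doc: Dict[str, Any], parent: str = "") -> Dict[str, Any]:
    """Illustrate table mode: each field becomes a column, nested fields are flattened.

    The dotted naming scheme (for example "address.city") is an assumption
    made for this sketch, not a documented guarantee.
    """
    columns: Dict[str, Any] = {}
    for key, value in doc.items():
        name = f"{parent}.{key}" if parent else key
        if isinstance(value, dict):
            # Recurse into nested documents and merge their flattened columns.
            columns.update(as_table_mode(value, name))
        else:
            columns[name] = value
    return columns


if __name__ == "__main__":
    # Hypothetical sample document, used only for illustration.
    sample = {"_id": "u1", "name": "Asha", "address": {"city": "Pune"}}
    print(as_document_mode(sample))
    print(as_table_mode(sample))
```

Table mode is the more convenient choice here because each field ends up as a separately addressable attribute in the target table.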

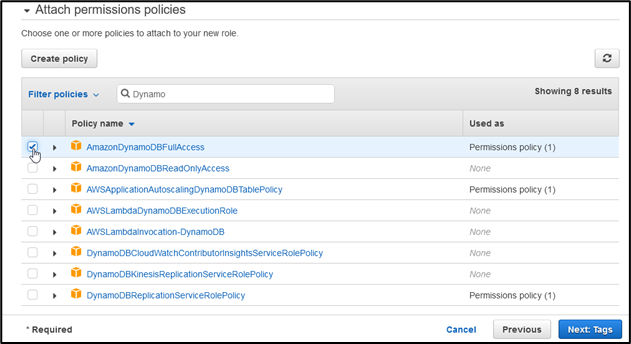

These permissions are required for the replication server to access the database.

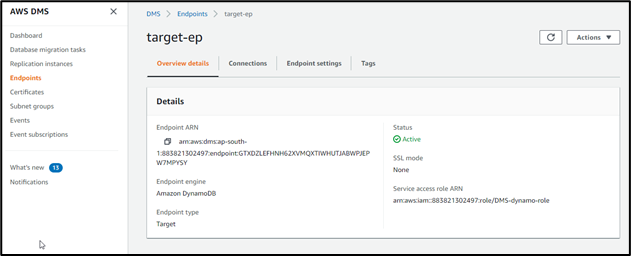

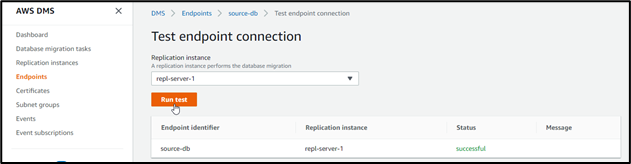
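The permissions mentioned above are set in the console screenshots. As a rough sketch of what such an IAM role might contain for a DynamoDB target, the Python below builds a trust policy that lets AWS DMS assume the role and a permissions policy with DynamoDB actions. The function names are hypothetical, and `"Resource": "*"` is a simplifying assumption that you would normally narrow to specific table ARNs.

```python
import json
from typing import Any, Dict


def dms_trust_policy() -> Dict[str, Any]:
    """Trust policy allowing the AWS DMS service to assume the role."""
    return {
        "Version": "2012-10-17",
        "Statement": [
            {
                "Effect": "Allow",
                "Principal": {"Service": "dms.amazonaws.com"},
                "Action": "sts:AssumeRole",
            }
        ],
    }


def dynamodb_target_policy() -> Dict[str, Any]:
    """Permissions the role needs to create and write DynamoDB tables.

    Resource "*" is an assumption for brevity; restrict it in real use.
    """
    return {
        "Version": "2012-10-17",
        "Statement": [
            {
                "Effect": "Allow",
                "Action": [
                    "dynamodb:PutItem",
                    "dynamodb:CreateTable",
                    "dynamodb:DescribeTable",
                    "dynamodb:DeleteTable",
                    "dynamodb:DeleteItem",
                    "dynamodb:UpdateItem",
                    "dynamodb:ListTables",
                ],
                "Resource": "*",
            }
        ],
    }


if __name__ == "__main__":
    # Print the JSON documents so they can be pasted into the IAM console.
    print(json.dumps(dms_trust_policy(), indent=2))
    print(json.dumps(dynamodb_target_policy(), indent=2))
```

The ARN of the resulting role is what the DynamoDB target endpoint refers to as its service access role.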

These endpoints are required for the DMS task to transfer the data from the source database to DynamoDB.

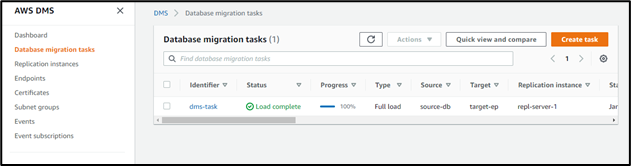
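The same endpoints and task can also be created with the AWS SDK instead of the console. The sketch below uses `boto3` and assumes it is installed and that AWS credentials are configured. The identifiers (`mongodb-source`, `dynamodb-target`, `mongodb-to-dynamodb`), the port, the `admin` auth source, and all function names are placeholders chosen for illustration, not values from this walkthrough. `NestingLevel` set to `"one"` selects table mode.

```python
import json
from typing import Any, Dict


def mongodb_source_endpoint(server: str, database: str,
                            username: str, password: str) -> Dict[str, Any]:
    """Build the arguments for a MongoDB source endpoint in table mode."""
    return {
        "EndpointIdentifier": "mongodb-source",  # placeholder name
        "EndpointType": "source",
        "EngineName": "mongodb",
        "MongoDbSettings": {
            "ServerName": server,
            "Port": 27017,  # default MongoDB port, assumed here
            "DatabaseName": database,
            "Username": username,
            "Password": password,
            "AuthType": "password",
            "AuthSource": "admin",  # assumed authentication database
            "NestingLevel": "one",  # "one" = table mode, "none" = document mode
        },
    }


def dynamodb_target_endpoint(role_arn: str) -> Dict[str, Any]:
    """Build the arguments for a DynamoDB target endpoint."""
    return {
        "EndpointIdentifier": "dynamodb-target",  # placeholder name
        "EndpointType": "target",
        "EngineName": "dynamodb",
        "DynamoDbSettings": {"ServiceAccessRoleArn": role_arn},
    }


def include_all_collections(database: str) -> str:
    """Table mapping with one selection rule that includes every collection."""
    rules = {
        "rules": [
            {
                "rule-type": "selection",
                "rule-id": "1",
                "rule-name": "include-all",
                "object-locator": {"schema-name": database, "table-name": "%"},
                "rule-action": "include",
            }
        ]
    }
    return json.dumps(rules)


def create_migration(replication_instance_arn: str, role_arn: str, server: str,
                     database: str, username: str, password: str) -> Dict[str, Any]:
    """Create both endpoints and a full-load task (requires boto3 and credentials)."""
    import boto3  # imported lazily so the builders above work without it

    dms = boto3.client("dms")
    source = dms.create_endpoint(
        **mongodb_source_endpoint(server, database, username, password))
    target = dms.create_endpoint(**dynamodb_target_endpoint(role_arn))
    return dms.create_replication_task(
        ReplicationTaskIdentifier="mongodb-to-dynamodb",  # placeholder name
        SourceEndpointArn=source["Endpoint"]["EndpointArn"],
        TargetEndpointArn=target["Endpoint"]["EndpointArn"],
        ReplicationInstanceArn=replication_instance_arn,
        MigrationType="full-load",
        TableMappings=include_all_collections(database),
    )
```

Creating the task does not start it. In practice you would test both endpoint connections first and then start the task, either from the console or with the SDK.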

Once the task completes, you can go to DynamoDB in the AWS console and see the tables that were created.
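If you prefer to check from a script rather than the console, a small sketch like the following can compare the collections you expected to migrate against the tables that now exist. It assumes `boto3` is installed, that credentials are configured, and that the target tables carry the same names as the source collections. The function names and the example region are hypothetical.

```python
from typing import Iterable, List


def missing_tables(expected: Iterable[str], existing: Iterable[str]) -> List[str]:
    """Return the expected table names that are not present, sorted by name."""
    present = set(existing)
    return sorted(name for name in expected if name not in present)


def list_dynamodb_tables(region_name: str) -> List[str]:
    """List all DynamoDB table names in a region (requires boto3 and credentials)."""
    import boto3  # imported lazily so missing_tables works without it

    client = boto3.client("dynamodb", region_name=region_name)
    names: List[str] = []
    # list_tables is paginated, so walk every page of results.
    for page in client.get_paginator("list_tables").paginate():
        names.extend(page["TableNames"])
    return names


if __name__ == "__main__":
    # Hypothetical collection names and region, used only for illustration.
    expected = ["users", "orders"]
    print(missing_tables(expected, list_dynamodb_tables("ap-south-1")))
```

An empty list means every expected table was found.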

Migrating your NoSQL data to AWS DynamoDB is an infrastructure upgrade that gives you single-digit millisecond performance. In addition, with global tables your data can be made available across AWS Regions, and DynamoDB encrypts your data at rest. For your CRUD operations, you can follow the AWS documentation to integrate your current application with AWS DynamoDB using its APIs, SDKs, and so on.

Big enterprises like Disney, Zoom, and Dropbox are taking advantage of the seamless experience provided by AWS DynamoDB.

We at CloudThat are an AWS (Amazon Web Services) Advanced Consulting Partner and Training Partner and a Microsoft Gold Partner. We help people build their knowledge of the cloud and help businesses aim for higher goals using best-in-industry cloud computing practices and expertise.

Feel free to drop a comment with any queries you have about AWS services, cloud and data migration, or consulting, and we will get back to you quickly. To get started, go through our Expert Advisory page and Managed Services Package, which are among CloudThat's offerings.
