
Introduction

Modern digital platforms rely heavily on APIs to expose services to mobile apps, web applications, and third-party integrations. However, as organizations scale, APIs become one of the most targeted entry points for abuse, attacks, and unexpected traffic spikes. Traditional infrastructure often struggles to handle sudden demand or malicious traffic patterns.

This blog explores the architecture, security considerations, and performance strategies for designing a secure, scalable serverless API platform.


Problem Context

APIs today face several challenges:

- Traffic spikes from viral applications or marketing events

- Malicious bot traffic and DDoS attempts

- Credential stuffing and API abuse

- Performance degradation during peak loads

- Limited visibility into API performance and failures

Traditional server-based architectures often struggle to scale dynamically and require significant operational effort. A serverless architecture removes most infrastructure management from the team's responsibilities while providing built-in scalability and high availability.

The goal is to build a platform that:

- Scales automatically

- Protects APIs from abuse and malicious traffic

- Provides deep observability for troubleshooting and optimization

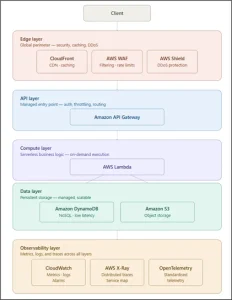

Architecture of a Secure Serverless API Platform

A typical secure API platform on AWS consists of multiple layers of protection and scalability.

High-Level Architecture

Core services involved:

- Amazon CloudFront – edge caching and global distribution

- AWS WAF – protection against malicious traffic

- Amazon API Gateway – managed API entry point

- AWS Lambda – serverless compute layer

- Amazon DynamoDB – scalable NoSQL backend

- Amazon CloudWatch and AWS X-Ray – monitoring and tracing

In this layered approach, security and performance controls are applied at the edge (Amazon CloudFront and AWS WAF) and at the API layer (Amazon API Gateway) before requests reach backend services.

Authentication Models for Secure APIs

Authentication is the first line of defense for an API platform. Different consumers require different authentication mechanisms.

1. User Authentication (OAuth / JWT)

For web and mobile applications, authentication can be implemented using Amazon Cognito. Amazon Cognito issues JSON Web Tokens (JWTs), which Amazon API Gateway validates (through a Cognito user pool authorizer or a JWT authorizer) before the request reaches the backend.

This model supports:

- OAuth2 and OpenID Connect

- Multi-factor authentication

- Social identity providers
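As a rough illustration of what token validation involves, the sketch below decodes a token's payload and checks the claims a Cognito access token is expected to carry (iss, token_use, client_id, exp). It is a minimal sketch under stated assumptions: the Region, user pool ID, and app client ID are placeholders, and the function names are hypothetical. It deliberately skips signature verification against the user pool's JSON Web Key Set (JWKS), which any real check must perform; API Gateway's built-in authorizers do the full validation for you.

```python
import base64
import json
import time
from typing import List, Optional


def _b64url_decode(segment: str) -> bytes:
    # JWT segments are base64url-encoded with the "=" padding stripped; restore it.
    return base64.urlsafe_b64decode(segment + "=" * (-len(segment) % 4))


def unverified_claims(token: str) -> dict:
    """Return the JWT payload WITHOUT checking the signature (inspection only)."""
    _header, payload, _signature = token.split(".")
    return json.loads(_b64url_decode(payload))


def claim_problems(claims: dict, region: str, user_pool_id: str,
                   app_client_id: str, now: Optional[float] = None) -> List[str]:
    """List reasons why the claims are unacceptable for a Cognito access token.

    An empty list means the claims look right. The signature must still be
    verified against the user pool's JWKS; that step is omitted in this sketch.
    """
    now = time.time() if now is None else now
    problems = []
    expected_issuer = f"https://cognito-idp.{region}.amazonaws.com/{user_pool_id}"
    if claims.get("iss") != expected_issuer:
        problems.append("unexpected issuer")
    if claims.get("token_use") != "access":
        problems.append("not an access token")
    if claims.get("client_id") != app_client_id:
        problems.append("issued to a different app client")
    if claims.get("exp", 0) <= now:
        problems.append("token expired")
    return problems
```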

2. Service-to-Service Authentication (IAM)

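For internal microservices or backend systems, IAM-based authentication using requests signed with Signature Version 4 (SigV4) provides strong identity verification without exposing credentials: the secret access key never leaves the caller, and only a signature derived from it is sent with each request.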

This ensures that only trusted services can access internal APIs.
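SigV4 never sends the secret access key. Instead, the caller derives a signing key from it through a chain of HMAC-SHA256 operations scoped to a date, Region, and service, and sends only the resulting signature, which AWS recomputes to verify the request. The sketch below shows only that derivation and the final signing step; building the canonical request and the string to sign is omitted, and in practice the AWS SDKs handle the whole process. The function names are hypothetical; "execute-api" is the service name used when signing requests to API Gateway.

```python
import hashlib
import hmac


def _hmac_sha256(key: bytes, msg: str) -> bytes:
    return hmac.new(key, msg.encode("utf-8"), hashlib.sha256).digest()


def derive_signing_key(secret_access_key: str, date_stamp: str,
                       region: str, service: str) -> bytes:
    """SigV4 signing key: HMAC chain scoped to date (YYYYMMDD), Region, and service."""
    k_date = _hmac_sha256(("AWS4" + secret_access_key).encode("utf-8"), date_stamp)
    k_region = _hmac_sha256(k_date, region)
    k_service = _hmac_sha256(k_region, service)
    return _hmac_sha256(k_service, "aws4_request")


def sign(string_to_sign: str, signing_key: bytes) -> str:
    """Hex-encoded HMAC-SHA256 signature placed in the Authorization header."""
    return hmac.new(signing_key, string_to_sign.encode("utf-8"), hashlib.sha256).hexdigest()
```

Because the signing key is scoped to a single day, Region, and service, a leaked signature cannot be replayed against a different service or Region.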

3. Custom Authorization

For advanced scenarios like multi-tenant SaaS platforms, a Lambda-based authorizer can validate custom tokens, enforce tenant isolation, and apply role-based access control.
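A minimal sketch of such a Lambda authorizer (REST API, TOKEN type) is shown below. The response shape (a principalId, an IAM policy document that allows or denies execute-api:Invoke, and an optional context map) is what API Gateway expects; everything else is an assumption for illustration: the hard-coded token table, the /tenants/{tenant-id}/... path convention used for tenant isolation, and the rule that readers may only issue GET requests.

```python
# Hypothetical token registry. A real authorizer would validate a signed token
# or look the caller up in a datastore instead of using a hard-coded dict.
TOKENS = {
    "token-tenant-a-admin": {"tenant_id": "tenant-a", "role": "admin"},
    "token-tenant-b-reader": {"tenant_id": "tenant-b", "role": "reader"},
}


def build_policy(principal_id, effect, resource, context=None):
    """Shape of the response API Gateway expects from a Lambda authorizer."""
    response = {
        "principalId": principal_id,
        "policyDocument": {
            "Version": "2012-10-17",
            "Statement": [
                {"Action": "execute-api:Invoke", "Effect": effect, "Resource": resource}
            ],
        },
    }
    if context:
        response["context"] = context  # values must be strings, numbers, or booleans
    return response


def lambda_handler(event, context):
    identity = TOKENS.get(event.get("authorizationToken", ""))
    if identity is None:
        raise Exception("Unauthorized")  # API Gateway answers 401 for this message

    # methodArn: arn:aws:execute-api:{region}:{account}:{api-id}/{stage}/{METHOD}/{path...}
    method_arn = event["methodArn"]
    parts = method_arn.split("/")
    http_method = parts[2]
    path = "/" + "/".join(parts[3:])

    # Tenant isolation (hypothetical convention): callers may only reach /tenants/{their-id}/...
    tenant_prefix = f"/tenants/{identity['tenant_id']}"
    same_tenant = path == tenant_prefix or path.startswith(tenant_prefix + "/")
    # Role-based access control: readers are limited to GET requests.
    role_allows = identity["role"] == "admin" or http_method == "GET"

    effect = "Allow" if same_tenant and role_allows else "Deny"
    return build_policy(
        principal_id=identity["tenant_id"],
        effect=effect,
        resource=method_arn,
        context={"tenantId": identity["tenant_id"], "role": identity["role"]},
    )
```

The context map is passed to the backend integration, so downstream functions can read the tenant ID without re-parsing the token. If authorizer result caching is enabled, the cached policy is reused for other methods called with the same token, so a policy scoped to a single methodArn can wrongly deny later requests; in that case the policy should cover every resource the caller may access.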

Throttling Strategies to Prevent API Abuse

Even authenticated users can, intentionally or accidentally, overwhelm APIs. Therefore, rate limiting and throttling mechanisms are essential.

Edge-Level Protection

Using AWS WAF, organizations can block excessive requests from suspicious IPs or bots.

Example:

- Block IP addresses sending more than 2000 requests in 5 minutes.
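Expressed as an AWS WAF (WAFv2) rate-based rule, the example above might look like the following Python dictionary, in the shape the WAFv2 API expects for one entry in a web ACL's Rules list. The helper name, rule name, and priority are placeholders; the 300-second evaluation window corresponds to the 5 minutes in the example.

```python
ALLOWED_WINDOWS = (60, 120, 300, 600)  # evaluation windows WAF accepts, in seconds


def rate_limit_rule(name: str, limit: int = 2000, window_seconds: int = 300,
                    priority: int = 0) -> dict:
    """Build a WAFv2 rule that blocks any single IP exceeding `limit` requests per window."""
    if window_seconds not in ALLOWED_WINDOWS:
        raise ValueError(f"window_seconds must be one of {ALLOWED_WINDOWS}")
    return {
        "Name": name,
        "Priority": priority,
        "Statement": {
            "RateBasedStatement": {
                "Limit": limit,
                "EvaluationWindowSec": window_seconds,
                "AggregateKeyType": "IP",  # count requests per client IP address
            }
        },
        "Action": {"Block": {}},
        "VisibilityConfig": {
            "SampledRequestsEnabled": True,
            "CloudWatchMetricsEnabled": True,  # publishes blocked/allowed counts
            "MetricName": name,
        },
    }
```

The dictionary can be passed in the Rules list of the boto3 wafv2 create_web_acl or update_web_acl calls. For a web ACL attached to CloudFront, the scope is CLOUDFRONT and the calls must be made in us-east-1.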

API Gateway Throttling

Amazon API Gateway provides built-in throttling based on the token bucket algorithm, configured through a steady-state rate limit and a burst limit.

Example configuration:

- Rate limit: 1000 requests per second

- Burst limit: 2000 requests

This protects backend services from sudden traffic spikes.
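To build intuition for how the rate and burst limits interact, the sketch below simulates a token bucket with the example values: the bucket holds up to 2000 tokens (the burst limit), refills at 1000 tokens per second (the rate limit), and each request consumes one token. This is an illustrative model, not API Gateway's internal implementation; the class name is hypothetical, and a rejected request corresponds to the HTTP 429 response a client would receive.

```python
class TokenBucket:
    """Token bucket: `rate` tokens added per second, capped at `burst` tokens."""

    def __init__(self, rate: float, burst: int, now: float = 0.0):
        self.rate = rate
        self.capacity = burst
        self.tokens = float(burst)  # start full, so an initial burst is allowed
        self.last = now

    def allow(self, now: float) -> bool:
        """Return True if a request arriving at time `now` (seconds) is admitted."""
        elapsed = max(0.0, now - self.last)
        self.tokens = min(self.capacity, self.tokens + elapsed * self.rate)
        self.last = now
        if self.tokens >= 1:
            self.tokens -= 1
            return True
        return False  # API Gateway would answer 429 Too Many Requests here
```

Starting from a full bucket, 2500 requests arriving at the same instant would see 2000 admitted and 500 rejected; half a second later, the bucket has refilled enough to admit another 500.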

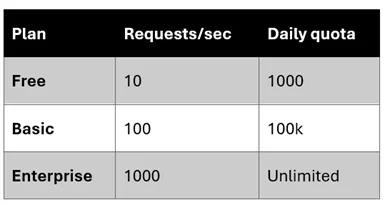

Usage Plans for API Consumers

For public APIs, usage plans allow different rate limits for different customers.

Example tiers:

This prevents noisy neighbors from consuming excessive resources.
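As an illustration of how tiers map onto usage plans, the sketch below builds the request parameters for boto3's apigateway create_usage_plan call. The tier names and limits are hypothetical placeholders chosen for the example, not values from this article or recommendations; each tier gets its own throttle and, optionally, a monthly quota.

```python
from typing import Optional

# Hypothetical tiers: illustrative numbers only.
TIERS = {
    "free": {"rate": 10.0, "burst": 20, "monthly_quota": 10_000},
    "basic": {"rate": 100.0, "burst": 200, "monthly_quota": 1_000_000},
    "premium": {"rate": 500.0, "burst": 1000, "monthly_quota": None},  # no quota
}


def usage_plan_request(tier: str, api_id: str, stage: str) -> dict:
    """Keyword arguments for apigateway create_usage_plan for the given tier."""
    spec = TIERS[tier]
    request = {
        "name": f"{tier}-plan",
        "apiStages": [{"apiId": api_id, "stage": stage}],
        "throttle": {"rateLimit": spec["rate"], "burstLimit": spec["burst"]},
    }
    quota: Optional[int] = spec["monthly_quota"]
    if quota is not None:
        request["quota"] = {"limit": quota, "period": "MONTH"}
    return request
```

The result can be passed as boto3.client("apigateway").create_usage_plan(**usage_plan_request("basic", api_id, "prod")); each customer's API key is then attached to the plan with create_usage_plan_key.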

Cold Start Mitigation in Serverless APIs

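Serverless environments sometimes experience cold starts: before a request can be processed, AWS Lambda must create a new execution environment and run the function's initialization code.

This adds latency to the affected requests and is most noticeable for infrequently used APIs and during sudden scale-out.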

Strategies to reduce cold start impact include:

Provisioned Concurrency

Provisioned concurrency in AWS Lambda keeps a configured number of execution environments initialized and ready to respond immediately, so requests served by them do not incur a cold start.
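Provisioned concurrency is configured on a published version or alias of a function, not on $LATEST. A minimal sketch using boto3's put_provisioned_concurrency_config is shown below; the helper name, function name, alias, and count are hypothetical, and the client is passed in so the helper can be exercised without AWS credentials.

```python
def enable_provisioned_concurrency(lambda_client, function_name: str,
                                   alias: str, environments: int) -> dict:
    """Ask Lambda to keep `environments` pre-initialized environments for an alias."""
    if environments < 1:
        raise ValueError("environments must be at least 1")
    return lambda_client.put_provisioned_concurrency_config(
        FunctionName=function_name,
        Qualifier=alias,  # a published version or alias; $LATEST is not allowed
        ProvisionedConcurrentExecutions=environments,
    )
```

For example, enable_provisioned_concurrency(boto3.client("lambda"), "orders-api", "live", 5) would request five pre-initialized environments for the live alias. Provisioned concurrency is billed for as long as it is configured.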

Lightweight Runtime Selection

Runtimes such as Node.js or Python generally initialize faster than heavier runtimes such as Java.

Function Optimization

Reducing dependency size and avoiding unnecessary initialization can significantly reduce cold start times.

Observability and Monitoring

Deep observability is critical for operating large-scale API platforms.

Metrics Monitoring

Amazon CloudWatch provides metrics such as:

- API latency

- Request count

- 4xx and 5xx error rates

- Throttling metrics

These metrics enable teams to detect traffic anomalies quickly.
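API Gateway and Lambda publish metrics like these to CloudWatch automatically. For application-level metrics (for example, latency per route), one option is the CloudWatch embedded metric format (EMF): the function writes a structured JSON log line, and CloudWatch extracts metrics from it. The sketch below builds such a record; the helper name, namespace, dimension names, and metric names are hypothetical.

```python
import json
import time


def emf_record(namespace: str, api_name: str, route: str,
               latency_ms: float, status_code: int) -> str:
    """Build a CloudWatch embedded metric format (EMF) log line."""
    record = {
        "_aws": {
            "Timestamp": int(time.time() * 1000),  # epoch milliseconds
            "CloudWatchMetrics": [{
                "Namespace": namespace,
                "Dimensions": [["ApiName", "Route"]],
                "Metrics": [
                    {"Name": "Latency", "Unit": "Milliseconds"},
                    {"Name": "ServerError", "Unit": "Count"},
                ],
            }],
        },
        # Dimension values and metric values live at the top level of the record.
        "ApiName": api_name,
        "Route": route,
        "Latency": latency_ms,
        "ServerError": 1 if status_code >= 500 else 0,
        "StatusCode": status_code,  # extra property: searchable in logs, not a metric
    }
    return json.dumps(record)
```

Inside a Lambda function, printing the returned string to standard output is enough: the output goes to CloudWatch Logs, where the metrics are extracted.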

Distributed Tracing

AWS X-Ray enables tracing across services.

A single API request can be traced across:

Amazon API Gateway → AWS Lambda → Amazon DynamoDB

This helps identify latency bottlenecks and service failures.
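Tracing works because each hop carries the same trace ID in the X-Amzn-Trace-Id header, whose value looks like Root=1-5759e988-bd862e3fe1be46a994272793;Parent=53995c3f42cd8ad8;Sampled=1. The sketch below generates a trace ID in that format (version 1, the request time in epoch seconds as 8 hex digits, then 24 random hex digits), parses the header, and builds the header a service would forward downstream. It only illustrates the structure: with active tracing enabled, API Gateway, Lambda, and the X-Ray SDK manage this header for you. The function names are hypothetical.

```python
import os
import time
from typing import Optional


def new_trace_id(now: Optional[float] = None) -> str:
    """X-Ray trace ID: '1-' + epoch seconds (8 hex) + '-' + 96 random bits (24 hex)."""
    epoch = int(time.time() if now is None else now)
    return f"1-{epoch:08x}-{os.urandom(12).hex()}"


def parse_trace_header(header: str) -> dict:
    """Split 'Root=...;Parent=...;Sampled=...' into a dict."""
    fields = {}
    for part in header.split(";"):
        key, sep, value = part.strip().partition("=")
        if sep:
            fields[key] = value
    return fields


def child_header(incoming: str, segment_id: str) -> str:
    """Header to forward downstream: same Root, this service's segment as Parent."""
    fields = parse_trace_header(incoming)
    header = f"Root={fields['Root']};Parent={segment_id}"
    if "Sampled" in fields:
        header += f";Sampled={fields['Sampled']}"  # keep the upstream sampling decision
    return header
```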

Advanced Observability

Integrating OpenTelemetry enables exporting traces and metrics to tools such as Prometheus or Grafana for deeper analysis.

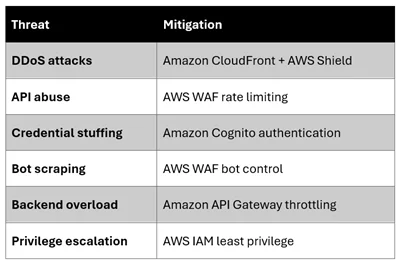

Security Threat Analysis

A secure API platform must defend against multiple threat vectors.

Layering these controls means that an attack that gets past one layer can still be stopped by the next, before it reaches backend services.

Conclusion

A secure serverless API platform on AWS provides a powerful combination of automatic scalability, strong security controls, and deep observability. By leveraging managed services such as Amazon API Gateway, AWS Lambda, and Amazon CloudFront, organizations can focus on delivering business value rather than managing infrastructure.

However, designing such a platform requires careful consideration of authentication models, traffic throttling strategies, cold start optimization, and comprehensive monitoring.

When implemented correctly, this architecture enables organizations to build resilient APIs that can handle massive traffic while maintaining strong protection against abuse and cyber threats.

Drop a query if you have any questions regarding serverless API platforms, and we will get back to you quickly.


FAQs

1. How does Amazon API Gateway help protect backend services from traffic spikes?

ANS: – Amazon API Gateway includes built-in rate limiting and throttling mechanisms. These allow administrators to define limits such as requests per second and burst limits. If the incoming request rate exceeds these limits, Amazon API Gateway returns an HTTP 429 (Too Many Requests) response, preventing excessive traffic from reaching backend services like Amazon DynamoDB or AWS Lambda.

2. What security mechanisms can be implemented to protect serverless APIs from abuse?

ANS: – A layered security model is recommended. Edge protection using Amazon CloudFront combined with filtering rules from AWS WAF can block malicious traffic before it reaches the API. Authentication can be handled through Amazon Cognito using OAuth2 or JWT tokens. Additionally, Amazon API Gateway usage plans and throttling policies help limit excessive usage by individual clients.

3. How can cold start latency be minimized in serverless APIs?

ANS: – Cold starts occur when a new compute instance must be initialized before processing a request. This can be mitigated by using AWS Lambda provisioned concurrency, optimizing function code to reduce initialization time, and selecting lightweight runtimes. In some scenarios, scheduled invocations can also keep functions warm, reducing latency.
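A sketch of the scheduled-invocation approach, assuming an Amazon EventBridge schedule that sends its default event (source "aws.events", detail-type "Scheduled Event") rather than custom input. The handler returns immediately for these pings so they stay cheap; the greeting response is a placeholder. Each ping keeps only one execution environment warm, so unlike provisioned concurrency this does not help with concurrent scale-out.

```python
import json


def is_scheduled_warmup(event) -> bool:
    """True for the default event delivered by an EventBridge scheduled rule."""
    return (
        isinstance(event, dict)
        and event.get("source") == "aws.events"
        and event.get("detail-type") == "Scheduled Event"
    )


def lambda_handler(event, context):
    if is_scheduled_warmup(event):
        # Skip real work: the invocation itself keeps this environment initialized.
        return {"warmed": True}
    return {"statusCode": 200, "body": json.dumps({"message": "hello"})}
```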

WRITTEN BY Aishwarya M

Aishwarya M works as a Cloud Solutions Architect – DevOps & Kubernetes at CloudThat. She is a proficient DevOps professional with expertise in designing scalable, secure, and automated infrastructure solutions across multi-cloud environments. Aishwarya specializes in leveraging tools like Kubernetes, Terraform, CI/CD pipelines, and monitoring stacks to streamline software delivery and ensure high system availability. She has a deep understanding of cloud-native architectures and focuses on delivering efficient, reliable, and maintainable solutions. Outside of work, Aishwarya enjoys traveling and cooking, exploring new places and cuisines while staying updated with the latest trends in cloud and DevOps technologies.


March 23, 2026
