Introduction to Prometheus:

Prometheus is an open-source monitoring and alerting tool used to monitor the resource utilization and health of systems running on-premises and on various cloud platforms. Conceptualized and built at SoundCloud, Prometheus has been around since 2012. Many organizations have adopted it because it is easy to use and free of licensing costs. It is actively maintained by a large community of developers and a vast user base. In 2016, Prometheus joined the Cloud Native Computing Foundation (CNCF), which also hosts Kubernetes.

Metrics are essential for understanding how host machines and applications use resources. They help businesses plan capacity, reduce user-facing latency, avoid application downtime, and design highly available, load-balanced infrastructure. Time-series metrics record resource consumption, such as CPU, memory, disk reads/writes, IOPS, and inbound and outbound network traffic, at regular intervals. Many applications can monitor time-series data; Prometheus is one of the leading ones.

a) Features of Prometheus

- Prometheus comes with its own query language, PromQL, for querying the metrics it scrapes from various sources.

- Each metric collected by Prometheus is identified by a metric name and an optional set of key-value pairs called labels.

- Setting up a Prometheus server is simple; it can run as a single node or as a set of federated servers.

- Scrape targets can be configured statically or found through service discovery; the targets expose their metrics through exporters (such as the Node Exporter) or client libraries.

- Once Prometheus is set up on a server and is scraping data from backend targets, the collected metrics can be explored and graphed in its built-in “Expression Browser”.

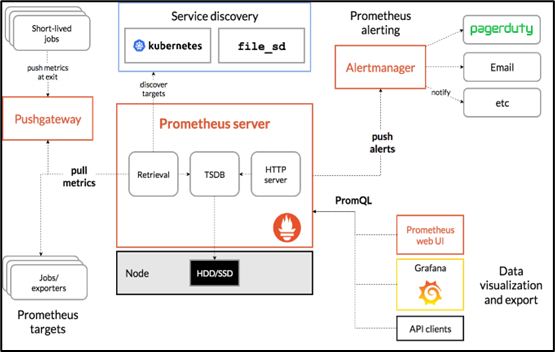

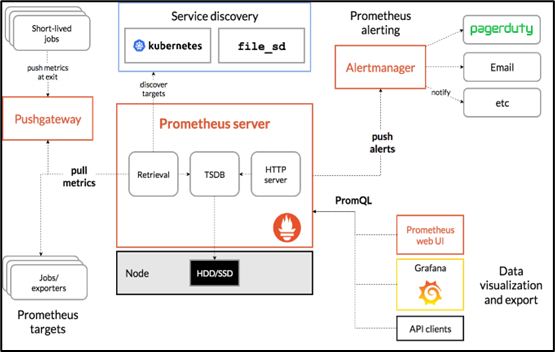

b) Prometheus Architecture

Prometheus primarily uses a pull model: it scrapes the metrics that targets expose over HTTP endpoints. Push-based collection is possible through a Pushgateway, to which jobs push their metrics and which Prometheus then scrapes. The Prometheus architecture can be divided into five components:

- Prometheus Server: the heart of the monitoring system. It scrapes the targets, stores the collected samples, and answers queries.

- Targets: the producers of metrics. Targets expose their metrics to the Prometheus server either through client libraries or through exporters. Client libraries, available for many languages, instrument application code and format its metrics in a form Prometheus can read. Exporters are used when the application's source code is not accessible; they run alongside the application. Examples include database exporters, the Node Exporter for host statistics, and exporters for storage, messaging, and HTTP services such as the HAProxy Exporter.

- Service Discovery: a mechanism that lets Prometheus find scrape targets automatically, such as EC2 instances or Kubernetes (K8S) objects in the cloud, and scrape data from them.

- Storage: Prometheus persists its data on the local disk of the host; in a cloud environment, an external persistent storage volume can be used.

- Alerting & Visualizing: the expression browser is a web-based UI where metrics can be queried and visualized in a central location. Alerts are defined as rules in Prometheus and are managed and routed by its Alertmanager component.
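To make the pull model concrete: a target only has to serve plain text in the Prometheus exposition format over HTTP (by convention at /metrics), and the server scrapes it periodically. The minimal sketch below just prints such text with a hypothetical helper, emit_counter; the metric name app_http_requests_total and its labels are made-up examples, not metrics from this setup.

```shell
# Sketch: print one counter in the Prometheus text exposition format.
# emit_counter and the metric/labels below are hypothetical examples.

# emit_counter NAME HELP LABELS VALUE
# Prints the HELP and TYPE metadata lines, then one sample line.
emit_counter() {
  local name="$1" help="$2" labels="$3" value="$4"
  printf '# HELP %s %s\n' "$name" "$help"
  printf '# TYPE %s counter\n' "$name"
  # A sample line is: metric name, {key="value",...} labels, then the value.
  printf '%s{%s} %s\n' "$name" "$labels" "$value"
}

emit_counter app_http_requests_total "Total HTTP requests handled." \
  'method="GET",code="200"' 1027
```

The label set inside the braces is what the Features list calls key-value pairs: every distinct combination of metric name and labels is stored as its own time series.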

Introduction to Grafana:

Since its inception in 2014, Grafana has become the de facto open-source visualization and alerting tool for many organizations. Its standout feature is that its data sources can reside anywhere, yet all of their data can be gathered and visualized on a single dashboard. Grafana offers a wide variety of ready-made dashboard templates for the tools and services being used, and every panel in a template is customizable. These dynamic dashboards can be shared with team members for collaborative visualization, and access to them can be secured with passwords or security tokens.

Grafana integrates with many third-party data sources, such as Graphite, Prometheus, InfluxDB, Elasticsearch, MySQL, and PostgreSQL, through plugins, and it is accessed in a web browser. It can display real-time streaming data from various sources as charts, bar and line graphs, histograms, and pie charts. Grafana retrieves data from each source using that source's own query language (for example, PromQL for Prometheus).

Prerequisites:

- AWS account with administrator permissions

- IAM Roles

- VPC, Subnets, Security Groups (Egress permissions)

- EKS Cluster

- Managed Node Group (Linux)

- AWS CLI

- eksctl

- kubectl

- helm

- metrics server

- Prometheus

- Grafana
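Several of these prerequisites are command-line tools (AWS CLI, eksctl, kubectl, and helm) that must be on the PATH. A small helper like the one below, a hypothetical convenience rather than part of the original steps, can confirm they are installed before you start; it relies only on the shell builtin command -v.

```shell
# Sketch: report which of the given command-line tools are missing from PATH.
# Prints one "missing: <tool>" line per absent tool on stderr and returns
# non-zero if any tool is missing. check_tools is a hypothetical name.
check_tools() {
  local missing=0 tool
  for tool in "$@"; do
    if ! command -v "$tool" >/dev/null 2>&1; then
      echo "missing: $tool" >&2
      missing=1
    fi
  done
  return "$missing"
}
```

For this walkthrough it would be called as `check_tools aws eksctl kubectl helm` once the tools have been installed.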

Set-up on AWS before Installing Prometheus:

Sign in to AWS as a non-root user that has the “AdministratorAccess” policy, so that you can create the IAM roles and policies needed to access and manage other AWS resources.

a) IAM Roles to be created:

On the IAM service page, create the following roles so that the EKS cluster and the node group can access other AWS resources and services. For each role, set the “Trusted Entity Type” to “AWS service” and choose the “Use Case” given below:

- For the EKS Cluster: select “EKS-Cluster” as the “Use Case”. Give the role a unique name, “EksClusterRole”, and create it. The “AmazonEKSClusterPolicy” policy is attached to this role automatically.

- For the Managed Node Group: select “EC2” as the “Use Case” and attach the following three permission policies:

- AmazonEKSWorkerNodePolicy

- AmazonEC2ContainerRegistryReadOnly

- AmazonEKS_CNI_Policy

Give the role a unique name: “AmazonEKSNodeRole” and create the role.

Apart from these roles, AWS automatically creates additional service-linked roles as they are needed, for example when the managed node group is created and when a load balancer is provisioned for Grafana later in this walkthrough.
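The same two roles can also be created from the AWS CLI. The sketch below assumes the CLI is already configured with credentials allowed to manage IAM. It writes the trust policies (the “Trusted Entity Type” part of the console wizard) to local JSON files and defines a hypothetical create_eks_roles function that creates the roles and attaches the AWS managed policies listed above.

```shell
# Sketch: create the EKS cluster role and node role with the AWS CLI.
# Role names follow the article; the JSON file names are arbitrary.

# Trust policy that lets the EKS control plane assume the cluster role.
cat > eks-cluster-trust.json <<'EOF'
{
  "Version": "2012-10-17",
  "Statement": [
    {
      "Effect": "Allow",
      "Principal": { "Service": "eks.amazonaws.com" },
      "Action": "sts:AssumeRole"
    }
  ]
}
EOF

# Trust policy that lets EC2 worker nodes assume the node role.
cat > eks-node-trust.json <<'EOF'
{
  "Version": "2012-10-17",
  "Statement": [
    {
      "Effect": "Allow",
      "Principal": { "Service": "ec2.amazonaws.com" },
      "Action": "sts:AssumeRole"
    }
  ]
}
EOF

# Hypothetical helper: create both roles and attach the managed policies.
create_eks_roles() {
  aws iam create-role --role-name EksClusterRole \
    --assume-role-policy-document file://eks-cluster-trust.json
  aws iam attach-role-policy --role-name EksClusterRole \
    --policy-arn arn:aws:iam::aws:policy/AmazonEKSClusterPolicy

  aws iam create-role --role-name AmazonEKSNodeRole \
    --assume-role-policy-document file://eks-node-trust.json
  local policy
  for policy in AmazonEKSWorkerNodePolicy AmazonEC2ContainerRegistryReadOnly AmazonEKS_CNI_Policy; do
    aws iam attach-role-policy --role-name AmazonEKSNodeRole \
      --policy-arn "arn:aws:iam::aws:policy/${policy}"
  done
}
```

Calling `create_eks_roles` once performs the creation; running it a second time fails because the roles already exist.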

b) Creating VPC, Subnet & Security Groups:

The default VPC, subnets, and security groups can be used when creating an EKS cluster. However, as a security best practice, it is better to create a custom VPC, subnets, and security groups with specific IP ranges and ingress and egress rules. With a custom VPC, an Internet Gateway and a route table must also be created and associated with the VPC.

c) Creating EKS Cluster:

- Give the cluster a unique name, “eks-prometheus-cls”.

- Leave the Kubernetes version at its default (1.22 at the time of writing).

- Select the “EksClusterRole” created earlier.

- Leave all other options at their defaults. Tag the resource as your business use case requires.

- Select the default or custom VPC, subnets (at least 2 subnets are needed), and security group from the drop-downs.

- Optionally, specify an IP range for your Kubernetes services.

- Set “Cluster Endpoint access” to “Public”.

- Leave the VPC CNI, CoreDNS, and kube-proxy add-ons at their default versions.

- Leave all control-plane logging options turned off.

- Review and create the EKS cluster.

d) Adding Managed Linux Node Group to EKS Cluster:

Once the EKS cluster is created and its status shows “Active”, managed node groups (worker nodes) can be added to it.

- In the EKS cluster's “Compute” section, click “Create a Node Group”.

- Give the node group a unique name, “eks-prometheus-nodegroup”.

- For “Node IAM role”, select the “AmazonEKSNodeRole” role created earlier.

- Leave all other settings as they are and click “Next”.

- Under “Node group compute configuration”, select:

  - AMI type: Amazon Linux 2

  - Capacity type: On-Demand

  - Instance types: t3.medium

  - Disk size: 20 GiB

- Under “Node group scaling configuration”:

  - Set the minimum, maximum, and desired size to 2.

- Under “Node group update configuration”, select:

  - Maximum unavailable: Number

  - Value: 1 node

- Select the subnets where the nodes will run.

- Configure SSH access to the worker nodes (optional).

- Review and create the managed node group.
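eksctl appears in the prerequisites but is never used in the steps above. As an alternative to the console steps for the cluster and node group, a ClusterConfig file like the one below could create both with `eksctl create cluster -f eks-prometheus-cls.yaml`. This is a sketch: the values mirror the article's choices, the region ap-south-1 is taken from the update-kubeconfig step, and the file name is arbitrary. Unless told otherwise, eksctl creates its own VPC and IAM roles instead of reusing the ones from the earlier steps, and Kubernetes 1.22 has since reached end of support on EKS, so a currently supported version will likely be needed.

```shell
# Sketch: write an eksctl ClusterConfig mirroring the console steps above.
# On-Demand capacity is eksctl's default for managed node groups.
cat > eks-prometheus-cls.yaml <<'EOF'
apiVersion: eksctl.io/v1alpha5
kind: ClusterConfig
metadata:
  name: eks-prometheus-cls
  region: ap-south-1
  version: "1.22"   # the article's version; choose a currently supported one
managedNodeGroups:
  - name: eks-prometheus-nodegroup
    amiFamily: AmazonLinux2
    instanceType: t3.medium
    volumeSize: 20   # GiB
    minSize: 2
    maxSize: 2
    desiredCapacity: 2
EOF
```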

e) Installing the AWS CLI:

- curl "https://awscli.amazonaws.com/awscli-exe-linux-x86_64.zip" -o "awscliv2.zip"

- sudo apt-get install unzip (needed only if unzip is not already installed; apt-get applies to Debian/Ubuntu hosts)

- unzip awscliv2.zip

- sudo ./aws/install

- If AWS CLI v2 is already installed, update it instead with: sudo ./aws/install -i /usr/local/aws-cli -b /usr/local/bin --update

- aws --version

f) Installing kubectl:

- curl -o kubectl https://s3.us-west-2.amazonaws.com/amazon-eks/1.22.6/2022-03-09/bin/linux/amd64/kubectl

- openssl sha1 -sha256 kubectl (prints the binary's SHA-256 checksum, which can be compared with the checksum AWS publishes)

- chmod +x ./kubectl

- mkdir -p $HOME/bin && cp ./kubectl $HOME/bin/kubectl && export PATH=$PATH:$HOME/bin

- echo 'export PATH=$PATH:$HOME/bin' >> ~/.bashrc

- kubectl version --short --client
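The openssl step only prints a checksum; it still has to be compared with the published value. The hypothetical helper below does that comparison, assuming sha256sum from GNU coreutils is available.

```shell
# Sketch: compare a file's SHA-256 digest with an expected hex string.
# Prints a status line and returns 1 on a mismatch. verify_sha256 is a
# hypothetical helper name; sha256sum comes from GNU coreutils.
verify_sha256() {
  local file="$1" expected="$2" actual
  actual="$(sha256sum "$file" | awk '{print $1}')"
  if [ "$actual" = "$expected" ]; then
    echo "checksum OK: $file"
  else
    echo "checksum MISMATCH: $file" >&2
    return 1
  fi
}
```

Assuming AWS publishes the checksum as kubectl.sha256 next to the binary (as its documentation described for this release), the check would be `curl -o kubectl.sha256 https://s3.us-west-2.amazonaws.com/amazon-eks/1.22.6/2022-03-09/bin/linux/amd64/kubectl.sha256` followed by `verify_sha256 kubectl "$(awk '{print $1}' kubectl.sha256)"`.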

g) Installing helm:

- curl https://raw.githubusercontent.com/helm/helm/master/scripts/get-helm-3 > get_helm.sh

- chmod 700 get_helm.sh

- ./get_helm.sh

- helm help

h) Connect to AWS, Set Context for EKS, Get svc, nodes, pods:

- aws sts get-caller-identity

- aws eks update-kubeconfig --region ap-south-1 --name eks-prometheus-cls

- kubectl get svc

- kubectl get nodes -o wide

- kubectl get pods -n kube-system
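Right after the node group is created, `kubectl get nodes` may still list nodes as NotReady. A sketch using `kubectl wait`, a standard kubectl subcommand, can hold until every node reports the Ready condition; the function name wait_for_nodes and the default 300s timeout are illustrative assumptions.

```shell
# Sketch: block until every node reports Ready (or the timeout expires),
# then list the nodes. wait_for_nodes and the 300s default are hypothetical.
wait_for_nodes() {
  local timeout="${1:-300s}"
  kubectl wait --for=condition=Ready node --all --timeout="$timeout" &&
    kubectl get nodes -o wide
}
```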

Installing Metrics Server on EKS:

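Metrics Server needs to be installed before installing Prometheus. The raw metrics that the Kubernetes API server exposes in Prometheus format can be viewed at its /metrics endpoint with: kubectl get --raw /metrics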
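The install command itself is not listed here. The sketch below assumes the upstream components.yaml release manifest from the kubernetes-sigs/metrics-server project, which is how the AWS EKS documentation installs Metrics Server; check that project's README for the release compatible with your cluster version. install_metrics_server is a hypothetical helper name.

```shell
# Sketch: install Metrics Server from its upstream release manifest
# and check that it is serving resource metrics.
install_metrics_server() {
  kubectl apply -f https://github.com/kubernetes-sigs/metrics-server/releases/latest/download/components.yaml
  # Wait for the deployment in kube-system to finish rolling out.
  kubectl rollout status deployment/metrics-server -n kube-system --timeout=180s
  # Per-node CPU/memory usage; may take a minute after rollout to appear.
  kubectl top nodes
}
```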

Installing & Setting up Prometheus using Helm:

Add the prometheus-community Helm repository, update it, and install Prometheus into the prometheus namespace using the commands below.

- kubectl create namespace prometheus

- helm repo add prometheus-community https://prometheus-community.github.io/helm-charts

- helm repo update

- helm upgrade -i prometheus prometheus-community/prometheus --namespace prometheus --set alertmanager.persistentVolume.storageClass="gp2",server.persistentVolume.storageClass="gp2"

- kubectl get pods -n prometheus

- kubectl --namespace=prometheus port-forward deploy/prometheus-server 9090 (this forwards local port 9090 to the Prometheus server, so the metrics can be viewed at http://localhost:9090/graph)

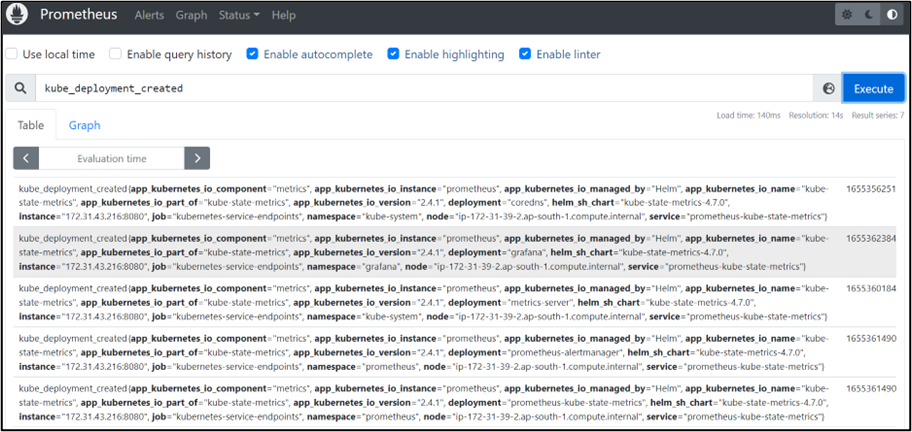

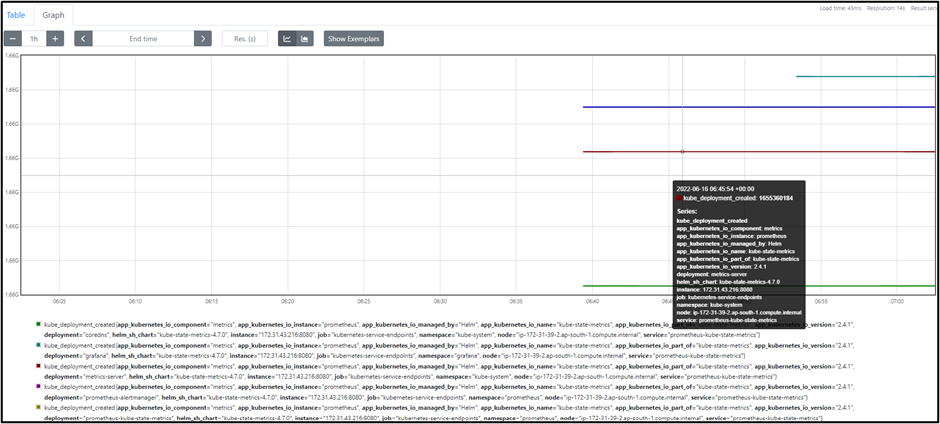

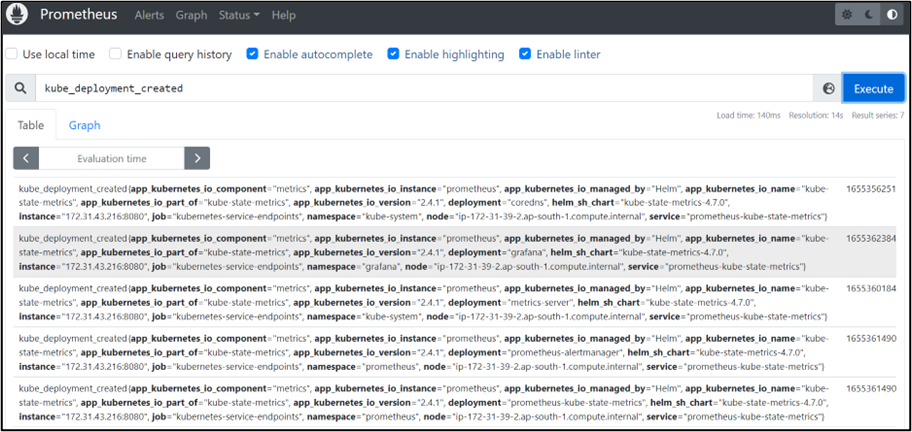

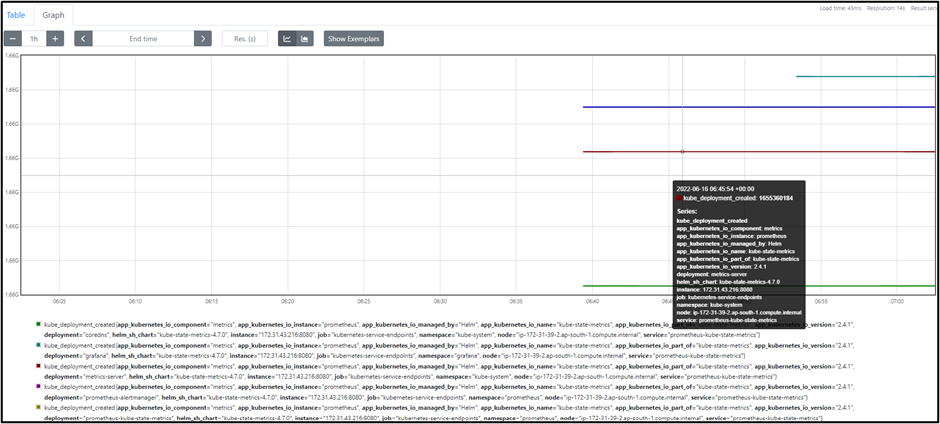

- There are hundreds of gauges and counters that can be selected from the drop-down and executed to view the raw metrics scraped from the target EKS nodes. The metrics can also be plotted using the /graph page.

- Some example metrics are listed below:

  - node_disk_info

  - node_disk_read_bytes_total

  - node_disk_write_bytes_total

  - kube_pod_status_ready

  - kube_daemonset_created
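The expression browser sits on top of Prometheus's HTTP API, so the same metrics can also be queried from a shell. The sketch assumes the port-forward from the previous section is running on localhost:9090; /api/v1/query is Prometheus's instant-query endpoint, while prom_query and the two PromQL expressions are illustrative.

```shell
# Sketch: run PromQL instant queries against the Prometheus HTTP API
# through the port-forward. PROM_URL defaults to localhost:9090 (assumption).
PROM_URL="${PROM_URL:-http://localhost:9090}"

prom_query() {
  # -G sends the data as URL query parameters on a GET request, and
  # --data-urlencode escapes the brackets, braces and quotes in PromQL.
  curl -sG "${PROM_URL}/api/v1/query" --data-urlencode "query=$1"
}

# Per-second disk read rate on each node, averaged over 5 minutes:
#   prom_query 'rate(node_disk_read_bytes_total[5m])'
# Number of pods currently reporting Ready:
#   prom_query 'sum(kube_pod_status_ready{condition="true"})'
```

The response is JSON whose data.result array holds one entry per matching time series.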

The screenshots above, taken from the Prometheus UI, show the following:

- The list of kube and node metrics can be viewed by typing just a few characters in the search bar; executing a query shows its raw metrics.

- Either the raw metrics or graphs of the scraped data can be viewed.

Installing & Setting up Grafana:

- kubectl create namespace grafana

- helm repo add grafana https://grafana.github.io/helm-charts

- helm repo update

- helm install grafana grafana/grafana --namespace grafana --set persistence.storageClassName="gp2" --set persistence.enabled=true --set adminPassword='EKS!sAWSome' --values ${HOME}/environment/grafana/grafana.yaml --set service.type=LoadBalancer

- kubectl get all -n grafana

- export ELB=$(kubectl get svc -n grafana grafana -o jsonpath='{.status.loadBalancer.ingress[0].hostname}')

- echo

- kubectl get secret --namespace grafana grafana -o jsonpath="{.data.admin-password}" | base64 --decode ; echo

- The command above prints the password for logging in to the Grafana dashboard.
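The helm install command reads a values file, ${HOME}/environment/grafana/grafana.yaml, whose contents are not shown, and that file must exist before the command runs. The sketch below assumes it is meant to register Prometheus as Grafana's default data source. It uses the grafana chart's datasources value and points at the prometheus-server Service that the Prometheus chart creates in the prometheus namespace; that Service listens on port 80 by default, so no port is given.

```shell
# Sketch: create the values file passed to helm install with --values.
# Its contents are an assumption: provision Prometheus as Grafana's default
# data source via the in-cluster DNS name of the prometheus-server Service.
mkdir -p "${HOME}/environment/grafana"

cat > "${HOME}/environment/grafana/grafana.yaml" <<'EOF'
datasources:
  datasources.yaml:
    apiVersion: 1
    datasources:
      - name: Prometheus
        type: prometheus
        url: http://prometheus-server.prometheus.svc.cluster.local
        access: proxy
        isDefault: true
EOF
```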

Integrating Prometheus with Grafana:

- Once Grafana is installed on the EKS cluster, it can be accessed through the AWS Classic Load Balancer, whose URL is the output of the echo command in the previous section.

- The username is “admin”, and the password is the one printed by the earlier kubectl get secret command.
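Besides logging in through the browser, Grafana's HTTP API can confirm that Prometheus is registered as a data source. The sketch assumes ELB was exported as shown earlier and that the grafana Secret holds the admin password; check_grafana_datasources is a hypothetical helper name.

```shell
# Sketch: list Grafana's configured data sources through its HTTP API.
# Assumes ELB holds the load balancer hostname exported earlier.
check_grafana_datasources() {
  local password
  # Read the admin password from the grafana Secret, as in the step above.
  password="$(kubectl get secret --namespace grafana grafana \
    -o jsonpath='{.data.admin-password}' | base64 --decode)"
  # GET /api/datasources returns the data sources as a JSON array.
  curl -s -u "admin:${password}" "http://${ELB}/api/datasources"
}
```

If the values file registered Prometheus, the JSON returned should include an entry whose type is prometheus.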

Monitoring & Visualizing using Grafana:

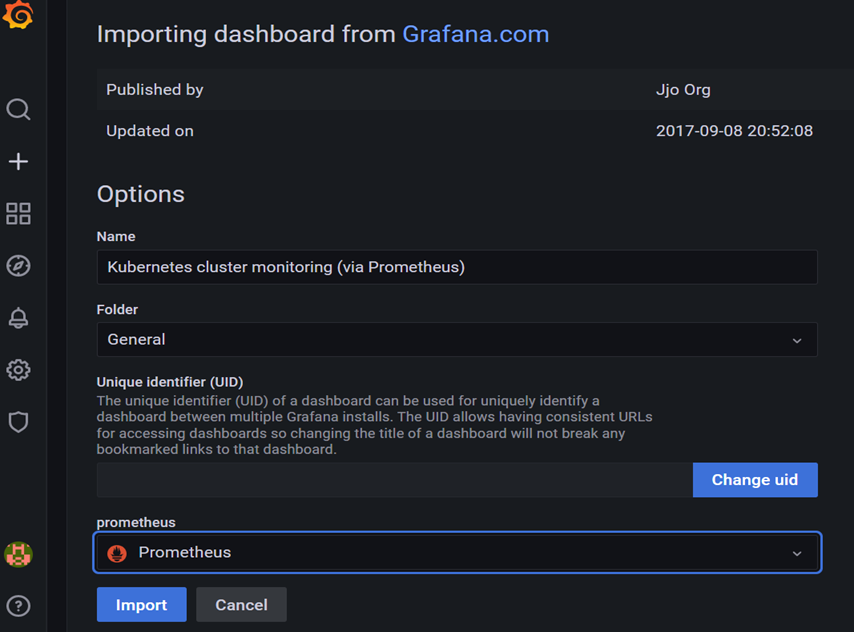

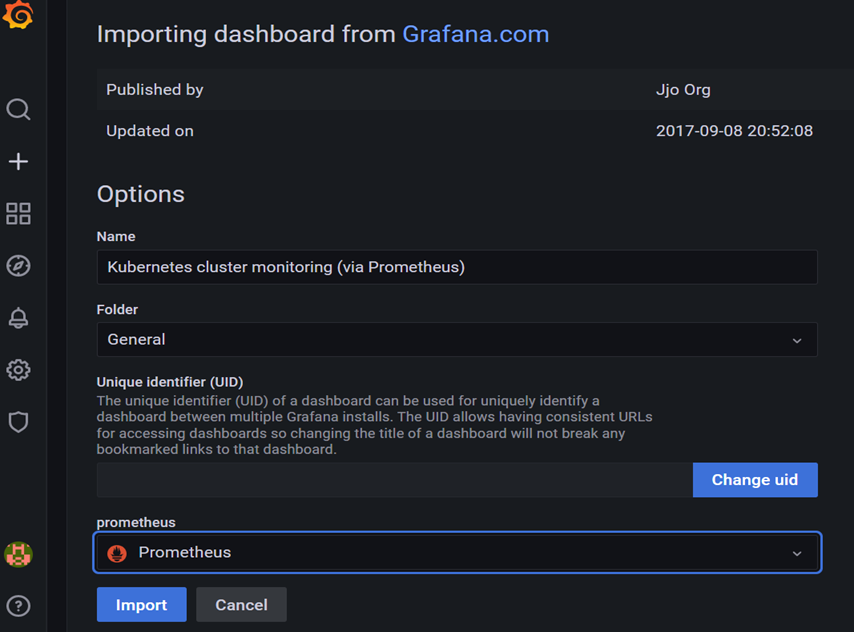

- On the home page, click the “+” sign to expand the options, then click “Import” to import the dashboard template for Kubernetes.

- Enter “3119” (the dashboard ID on Grafana.com) to load the desired dashboard template and its panels from the Grafana.com website.

- Once the dashboard details load, select Prometheus from the drop-down and click “Import.”

- Once the import succeeds, the time-series metrics scraped from the Prometheus endpoints start showing up on the dashboard as pie charts, line graphs, and bar graphs.

- You can select the data source as Prometheus and then the node from which you want to visualize the metrics.
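The manual import can also be automated. The grafana Helm chart accepts dashboardProviders and dashboards values that download a Grafana.com dashboard by its ID when the pod starts. The sketch below assumes revision 1 of dashboard 3119 (every Grafana.com dashboard has a revision 1, but a newer one may exist) and the data source name Prometheus; the file name grafana-dashboards.yaml is arbitrary.

```shell
# Sketch: values that make the grafana chart provision dashboard 3119.
# revision: 1 is an assumption; check Grafana.com for the current revision.
cat > grafana-dashboards.yaml <<'EOF'
dashboardProviders:
  dashboardproviders.yaml:
    apiVersion: 1
    providers:
      - name: default
        orgId: 1
        folder: ''
        type: file
        disableDeletion: false
        editable: true
        options:
          path: /var/lib/grafana/dashboards/default
dashboards:
  default:
    kubernetes-cluster:
      gnetId: 3119
      revision: 1
      datasource: Prometheus
EOF
```

It could then be applied to the existing release with `helm upgrade grafana grafana/grafana --namespace grafana --reuse-values --values grafana-dashboards.yaml`.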

About CloudThat

CloudThat is the official AWS (Amazon Web Services) Advanced Consulting Partner, Microsoft Gold Partner, Google Cloud Partner, and Training Partner, helping people develop their knowledge of the cloud and helping businesses aim for higher goals using best-in-industry cloud computing practices and expertise. We are on a mission to build a robust cloud computing ecosystem by disseminating knowledge on technological intricacies within the cloud space. Our blogs, webinars, case studies, and white papers enable all the stakeholders in the cloud computing sphere.

If you have any queries about the AWS EKS cluster, Prometheus and Grafana, or any other services, drop them in the comment section and I will get back to you quickly.

CloudThat is a house of All-Encompassing IT Services on the cloud offering Multi-cloud Security & Compliance, Cloud Enablement Services, Cloud-Native Application Development, and System Integration Services.

WRITTEN BY Saritha Nagaraju

Saritha is a Subject Matter Expert - Kubernetes working as a lead at CloudThat. She has relentlessly kept upskilling with the latest trending technologies and delivering the best cloud-native solutions to Businesses in all sectors. She is a Microsoft Certified Solution Architect, Certified Windows Server Administrator, and Windows Networking professional and strives towards providing the best cloud experience to our customers through transparent communication, methodical approach, and diligence.
